The Copilot Era is Over

Copilots removed the friction of finding information but left the ‘doing’ to humans. Discover why 2026 is the year B2B revenue teams move from reactive chatbots to proactive AI agents.

TL;DR

- The Copilot era solved the problem of access to information, but it created a new bottleneck: action.

- Copilots are interrogative; they wait for a human to ask a question and then hand the manual work back to the user.

- While manageable for 10 accounts, the Copilot model breaks down at 100+ accounts, as the volume of signals outpaces the human capacity to "chat" with a tool.

- Unlike Copilots, Agents are proactive: they use your proprietary first-party data to monitor the pipeline 24/7, drafting outreach and enriching CRM records before a human even opens their laptop.

- We’re moving from a world of "AI as a research assistant" to "AI as a participant" in the revenue team.

Somewhere in your company right now, a revenue rep is doing something that would look absurd if you described it out loud: they have found a high-intent account, confirmed it fits the ICP, and established that the timing is right, and now they are switching between four tabs to write an email, manually adding the account to a LinkedIn campaign, and making a note to ask someone in RevOps to enrich the CRM record when they get a moment. The intelligence part took thirty seconds. The doing-something-about-it part will take most of the morning.

This is the gap that AI was supposed to close. And for a while, the category that emerged (copilots, assistants, and chat interfaces built on top of your data) looked like it was closing it. You could ask your pipeline a question and get a clean answer. You could surface an intent signal without writing a SQL query. It felt like ✨magic✨ (until it didn’t). The friction of getting to information dropped dramatically, and that felt like progress because, for a time, it was.

But there is a version of progress that solves one problem so visibly that it obscures the problem it leaves untouched. Copilots made it easier to know things, but they did almost nothing about what happens after you know them.

The half-solved problem that nobody wanted to name

The promise of AI in B2B has always been about reclaiming time, giving revenue teams back the hours they spend stitching together data, interpreting signals, and producing reports that are outdated before they are shared. And copilots delivered on part of that promise. Ask the right question, get the right answer, and move faster. That part worked.

What it didn’t account for is the actual work that begins after the question has been answered.

In practice, the bottleneck for most revenue teams is not only finding the answer. It is the chain of actions that the answer is supposed to trigger. A rep learns that a key stakeholder just changed roles at an open deal (a great signal and genuinely useful). But now, they have to write personalized outreach for every contact in the account, update the deal record, adjust the sequence, fire the LinkedIn campaign, and probably brief their manager before the next forecast call. The insight arrived in seconds, but the work it created will take hours.

Copilots, by design, hand the work back to you.

They were built on the assumption that a human will always be in the loop to interpret every answer and decide what to do next. That assumption made sense when the alternative was doing all the research manually, too. It makes much less sense now that we know the research can be automated, because it turns out the research was never really the hard part.

Copilots made it faster to know things, but what revenue teams actually needed was for things to happen.

What happens when you scale the Copilot model? It breaks

The copilot approach is forgiving when your pipeline is small. When you have a handful of accounts to think about, the human handoff between answer and action is annoying but manageable. A rep can take the signal, process it, and respond within a reasonable window. The gap between knowing and doing is measured in minutes.

Scale that up to fifty accounts, and the gap starts to widen. At a hundred accounts, it becomes structurally unsustainable. That is because the volume of signals doesn’t grow linearly with the number of accounts; it compounds. More accounts mean more intent signals, more stakeholder changes, more website visits, more campaign interactions, and more churn risks surfacing simultaneously. A copilot that answers questions one at a time cannot keep up with a pipeline that continuously generates signals. And the signals that go unacted upon aren’t a minor inefficiency. They are the deals that go cold while your team is busy processing the signals it managed to catch.

Note: This is not a criticism of the companies that built copilots. It is a recognition that the category solved a genuine first problem (access to information) and that solving it has now made the second problem impossible to ignore. The question is whether the model of a human asking questions and then executing the answers manually is the right one for where we are now.

The shift that is already underway

The teams that have moved furthest in this direction are not waiting for someone to notice a signal and ask the right question. They’ve started building systems that continuously monitor their pipeline and act on what they see, without needing to be prompted.

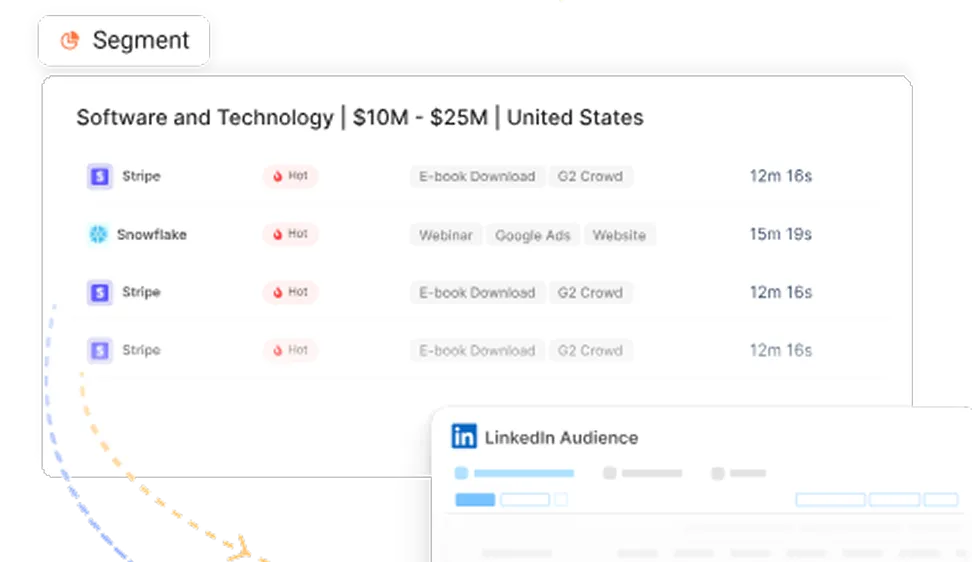

Let’s take an example: a high-intent account appears in the pipeline, outreach is drafted for every contact, the LinkedIn campaign fires, the CRM record gets enriched with the latest funding round and hiring signals, and the rep gets a briefing rather than a task list. The system doesn’t sit idle waiting for someone to type a lengthy prompt. Instead, it moves, and it moves before your team has figured out where to start.

This way of thinking is different because it reframes the question ‘What is AI for?’ in a revenue context. The copilot model is interrogative; you ask it questions, and it gives you answers. What is emerging now is continuous and proactive; the system watches your pipeline the way a very attentive colleague would, surfaces what matters before you think to ask, and, in an increasing number of cases, has already started acting on it by the time you look up.

It’s important to note that the difference is not the quality of the intelligence; both systems know the same things about the account. The difference is what the intelligence does next and whose time it consumes getting to that point.

Why does first-party data change everything about this?

One of the underappreciated reasons the copilot model persists is that most AI tools are still working from third-party data: generic signals scraped from the web, intent data aggregated from browsing behavior across the whole market, and enrichment pulled from sources that every competitor also has access to. When your intelligence is the same as everyone else's, the advantages you can extract from it are limited. Whatever edge remains comes only from the speed of access and the depth of the signal.

First-party data changes the equation entirely. Your CRM history, your website behavior, your ad engagement, your G2 intent signals tied to specific accounts that already know you: this is context that no third-party source can replicate, because it is a record of the specific relationship between your company and your accounts. An AI system that is grounded in this data is not working from the same signals as your competitors. It is working from something genuinely proprietary, and its actions are proportionally more targeted as a result.

This is why the shift from the Copilot model to the Agent model reflects an important theory of what makes AI valuable in a B2B context. Copilots become more valuable as the data gets richer, but they still ultimately depend on a human to act on what they surface. Agents that are grounded in first-party data and built to act continuously compound that advantage in a way that copilots structurally cannot.

Your first-party data is the one thing your competitors can’t copy. An agent built on it is a compounding and competitive advantage.

What does this actually look like in practice?

The teams making this transition are not ripping out their existing stack and starting over. They are changing where the work happens. Research that used to happen in a tool now happens in an agent that runs before the rep opens their laptop. Reports that used to be built manually on a Tuesday afternoon are now auto-generated from live data and ready to share before the meeting starts. Signals that used to get missed because nobody happened to check at the right moment are now surfaced automatically, with the recommended action already attached.

The practical effect is that the work itself changes shape and becomes more efficient. Less of it happens in response to questions. More of it happens in response to things the system has already figured out. Pipeline reviews become conversations about what to do next rather than investigations into what happened. Sales calls start with context rather than with a rep scrambling to remember where they left off. Churn risks surface before the renewal conversation, not after.

None of this requires a different kind of data. It requires a new relationship with your existing data, one in which the system works with it constantly instead of waiting for a request.

What’s next?

Copilots were not a mistake. They were the right first step for a category that needed to prove that AI could work reliably with business data before it was trusted to act on it. That proof has been made. The next question is not whether AI should be doing more of the work; most teams that have used a copilot for a year will tell you the answer is obvious. The question is what the architecture looks like when the goal is action rather than answers.

The answer emerging is a system that starts with your first-party data, understands your pipeline continuously rather than on demand, and closes the loop between signals and actions without requiring a human to serve as the bridge. Something closer to a very capable, very fast, permanently attentive member of your revenue team.

The Copilot era established that AI belongs in the revenue stack. What comes next establishes what it is actually there to do.

Scout is Factors' answer to this ✨new era✨. Built on your first-party data, it's already running before you ask for anything.

Learn more at Factors - Scout.

Frequently Asked Questions (FAQs): The Copilot Era Is Over, and Why B2B Teams Are Shifting to AI Agents

Q1. What do you mean by the ‘Copilot era’?

The Copilot era was the first phase of AI in B2B, where tools helped you ask better questions and get faster answers. They reduced the effort required to find information, but they still depended on a human to decide what to do next.

Q2. Why does the Copilot model break at scale?

Because signals grow faster than your team’s ability to process them. As your pipeline grows, so do intent signals, stakeholder changes, and engagement data. A system that waits for you to ask questions cannot keep up with a constantly changing pipeline.

Q3. What is different about the Agent model?

Agents do not wait for prompts. They continuously monitor your data, identify what matters, and take the first steps automatically, whether that is drafting outreach, updating CRM records, or triggering campaigns. The goal is to reduce the gap between signal and action.

Q4. Why does first-party data matter so much here?

Most tools rely on third-party data that everyone has access to. Your first-party data, like CRM history, website behavior, and campaign engagement, is unique to your business. Agents built on this data can act with far more precision because they understand your actual relationship with each account.

Q5. Does this approach mean humans are no longer needed in the process?

Not at all. The role of the human shifts. Instead of spending time on research and manual execution, teams start with context and focus on decisions, conversations, and closing deals. The system handles the groundwork so the team can move faster.

Q6. Do you need to replace your entire stack to adopt this?

No. The shift is not about replacing tools; it is about changing where the work happens. Instead of manually pulling data and acting on it, the system starts doing that work in the background using the data you already have.

Q7. Where does Scout fit into this?

Scout is built for this exact shift. It uses your first-party data and connects signals to actions, so your team doesn't have to start over every time something changes.

