Never had more data, never been more lost

78% of B2B teams use AI, but only 19% see revenue impact. Read about why the ‘data problem’ in 2026 is a timing issue and how to bridge this gap with Scout.

78% of B2B teams have adopted AI in some form. Only 19% can point to a real revenue impact. Which group are you in?

TL;DR

- Most teams have a decision timing problem; the signal exists, but it shows up too late to change anything.

- AI has mostly been used to answer questions faster, while the real bottleneck sits in what happens after the answer.

- The gap between adoption and revenue impact comes from workflows staying the same, even as tools get smarter.

- High-performing teams stop treating AI as a search layer and start using it to continuously watch, prioritize, and nudge action.

- The shift happens when signals don’t wait for humans to go looking for them; they surface on their own with clear next steps.

- When that happens, pipeline movement becomes less reactive and a lot more intentional.

Nobody woke up one day and said, “Let’s build a data problem 🙂.” Every tool your revenue team adopted was a reasonable decision made at a reasonable time: a CRM to track deals, an ad platform to run campaigns, a BI tool to make sense of the numbers, and an intent tool to find accounts that are in-market. Each one solved a real problem (or so it promised). And each one, without anyone planning for it, became another place where data lives, one that nobody is fully responsible for connecting to anything else.

The result? Revenue teams, overwhelmed by information, consistently struggle to answer the most critical questions, such as:

- Which accounts deserve attention right now?

- Why is this deal moving slowly?

- What actually drove the pipeline last quarter? Was it the campaigns, the events, the outbound, or something else entirely?

These are not exotic analytical questions; they’re the questions that should take thirty seconds, and for most teams, they still take three hours, a Slack thread, and at least one 30-minute meeting.

This is the real shape of the data problem in B2B in 2026: a fundamental disconnect between the information being collected and the decisions it is supposed to support.

Here’s a stat that should make you a little uncomfortable

78% vs 19%

AI adoption across B2B teams vs. teams that can point to meaningful revenue impact from it.

Sit with that gap for a moment, because it tells a more specific story than it first appears to. The 78% figure means that the question of whether AI belongs in the revenue stack is essentially settled; teams have made their bets, and most of them have made the same one. The 19% figure means that the vast majority of those bets have not yet paid off in any measurable way. This is not a story about AI failing, per se; it is a story about how AI has been deployed.

Under the AI banner, most B2B teams have adopted a similar model: you pose a question, and the tool provides an answer. Think chat interfaces layered on top of CRM data, natural-language queries against dashboards, and assistants that can summarize a deal or draft an email if you give them the right prompt. These are genuinely useful capabilities. They are also, in a structural sense, the same workflow as before, just with a smarter search engine in the middle. You still have to know what to ask, interpret what comes back, and decide what to do with it.

The 59-point gap between adoption and impact is, to a significant degree, the cost of that structural limitation. Teams adopted the tools and then discovered that making them work still required the same human judgment and manual effort as before. The tools got smarter, but unfortunately, the process didn’t change.

Adding AI to a broken workflow does not fix the workflow. It just means you reach the same bottleneck faster.

The way data actually fails teams (and it is not what most people think)

The failure mode most people describe when they talk about data problems is inaccuracy: dirty CRM records, unreliable attribution, and intent signals that don’t map to real buying behavior. Those problems are real and worth solving, but they’re not the primary reason behind the 59-point gap between AI adoption and revenue impact. You can have perfectly clean, perfectly accurate data and still have the same problem.

The more common failure mode is timing.

Your CRM knows which deals are open; your website knows which accounts visited the pricing page three times this week; your ad platform knows which contacts engaged with the campaign; your G2 data knows which accounts are researching your category right now. All of it is accurate, and all of it is sitting somewhere, correct and unconnected, waiting for someone to pull it together and do something with it.

By the time that happens (by the time the SDR opens the account, the marketing manager pulls the engagement report, and RevOps finishes the attribution analysis), the moment has passed. The ship hasn’t just sailed; it has probably already dropped anchor somewhere else.

The account that researched alternatives three days ago has either moved on or been reached by someone faster. The deal that showed early churn signals two weeks ago has already started to slip. The signal was right, but the timing was wrong, and the timing was wrong because the signal had to wait for a human to go looking for it.

This is the data problem as it actually exists for most B2B revenue teams: not a shortage of information, but a systematic failure to get the right information to the right person at the moment it would change what they do.

Why didn’t the obvious fixes fix it?

- The first wave of responses to this problem was more dashboards.

If the issue is that people can’t see the data, build better visualizations and give everyone access. This helped at the margins but didn’t solve the underlying issue, because the data was already accessible in principle; the problem was accessing it in practice. Getting to it required switching tools, knowing what to look for, and finding time that most revenue team members don’t have between the task they just finished and the next meeting.

- The second wave was better integrations.

Connect the CRM to the ad platform, the ad platform to the BI tool, and the BI tool to the intent data. This was closer to the right instinct but ran into a practical reality: integrations are a RevOps project; they break, they require maintenance, and they still produce data that someone has to interpret and act on. The loop got tighter, but it was still a loop that required a human to close it.

- The third wave (the current one) is AI assistants.

As discussed, these are genuinely useful for answering questions but leave the fundamental structure of the workflow intact. You still have to show up with a question, and you still have to do something with the answer. The AI is a faster research assistant, but the problem was never the speed of the research.

Every solution to the data problem so far has made it easier to get to the information. None of them changed what happens once you get there.

What are the 19% doing differently?

The teams that have moved from AI adoption to AI impact are not, by and large, the ones with the cleanest data or the most sophisticated tooling. They’re the ones who changed what they expect the system to do. Instead of building AI into the workflow as a smarter tool for humans to query, they have started building it as a participant in the workflow. This participant watches the pipeline continuously, surfaces what matters before anyone asks, and, in an increasing number of cases, takes the first action rather than waiting for a human to decide.

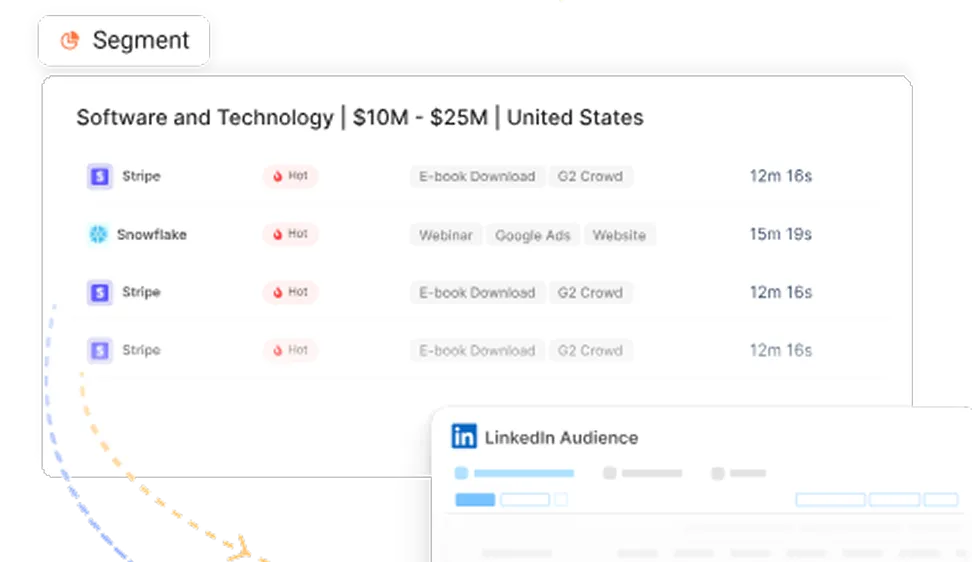

In practice, this looks like a rep starting their morning with a ranked list of accounts that need attention today, each with a specific reason and a recommended next step, because the system identified it overnight. It also looks like a CMO walking into a pipeline review with attribution already assembled and the key questions already answered, rather than spending the first twenty minutes of the meeting pulling numbers together. It looks like a churn risk surfacing in Slack with the relevant account history and a suggested action three weeks before the renewal conversation, rather than the day before it.

What these teams share is a different expectation: the system’s job is to make sure the right actions happen without anyone needing to remember or recommend them.

So, what actually closes the gap?

Closing the gap between the 78% and the 19% requires being honest about what that gap actually represents. It’s not really a gap in data quality or AI capability. It’s a gap between what teams have built (systems that respond to questions) and what they actually need (systems that participate in the work without needing to be prompted).

The data your business has already collected is, in most cases, sufficient to do this. Your CRM history and deal movement, your website engagement and campaign performance, your intent signals tied to real accounts: all of it already exists, and most of it is already accurate enough to act on. The missing piece is a system that treats the data as something to work from continuously rather than something to query occasionally.

Here’s what changes when a system continuously monitors the pipeline, surfaces signals, and connects the dots across tools: questions that currently take thirty minutes will take thirty seconds. Signals that currently get missed because nobody happens to check at the right moment will stop getting missed, because the system eliminates the “right moment”; it is always checking. All of this works because the answer has already been assembled before anyone needs to involve a human.

That’s not a far-fetched vision of where B2B revenue teams are going. It is a description of where the best of them already are. The gap between 78% and 19% is the distance between having adopted something and having changed something. Closing this gap is the actual work.

Scout is Factors' answer to this gap.

Built on the first-party data your business already has. Watching your pipeline before you ask anything. Closing the loop between signal and action so your team doesn’t have to.

Scout for more pipeline; here’s how.

Frequently Asked Questions (FAQs): Never had more data, never been more lost

1. Why is there such a large gap (59 percentage points) between AI adoption and revenue impact?

The gap exists because most teams use AI as a smarter search tool for their existing data. You still have to know what to ask and when to ask it. If the underlying manual workflow hasn't changed, the AI can't fix the timing issues that cause deals to slip.

2. Is "dirty data" the main reason B2B marketing fails?

While data accuracy matters, the blog argues that latency (timing) is the bigger killer. Even with perfect data, if it takes three hours and a meeting to realize an account is ready to buy, you’ve likely already lost the lead to a faster competitor.

3. What is the difference between an AI Assistant and an AI Participant?

An Assistant waits for a human to prompt it with a question (e.g., "Summarize this account"). A Participant (like Scout) monitors the data in the background and proactively alerts the team (e.g., "This account just viewed the pricing page and G2, act now").

4. How does Scout specifically solve the "timing" problem?

Scout connects your first-party data sources (CRM, website behavior, and intent signals) and monitors them 24/7. It identifies high-intent patterns overnight and provides a ranked list of actions for reps every morning, eliminating the need for manual research.

5. Do I need a new data stack to use Scout?

No. Scout is designed to sit on top of the first-party data you are already collecting in your CRM, ad platforms, and website. It turns your existing data into a "live" system of action.

