LLM Use Cases and Visualization: How Large Language Models Power Marketing AI

Explore practical LLM use cases for B2B marketers. Learn how large language models improve targeting, reporting, content, and pipeline growth.

TL;DR

- Large language models (LLMs) solve real marketing problems like slow reporting, weak lead qualification, fragmented data, and content bottlenecks, not just "AI for the sake of AI."

- The twelve highest-impact LLM use cases for marketing teams span campaign summaries, ad copy generation, audience segmentation, attribution narratives, intent detection, and forecasting.

- LLMs become far more powerful when connected to your own revenue data (CRM, ad platforms, web analytics) instead of running as standalone prompt tools.

- The smartest place to start is reporting summaries, content repurposing, or lead scoring, because ROI shows up quickly and implementation is lightweight.

- Hallucinations, privacy risks, and generic outputs are real concerns that require human review and proprietary data grounding.

It’s Monday morning (firstly, noooo😭)... you have 27 browser tabs open, three dashboards loading painfully slowly, and one Slack message that simply says: “Need insights for the board deck by 11.” *cue loud internal and external screaming*.

You click into campaign data… there are numbers everywhere. CTR is up, pipeline is flat, and website traffic is rising from accounts sales has never heard of. LinkedIn engagement looks strong, but nobody can tell if it came from actual buyers or people who just enjoy liking thought leadership while avoiding work.

Then someone says the sentence every marketing team has now heard at least once:

“Can’t AI just figure this out?”

Fair question. Slightly off-tone, but fair.

Because this is where large language models stopped being a fun toy that writes birthday poems and became something much more interesting for marketers. They can read messy datasets, summarize patterns, turn dashboards into narratives, surface hidden intent signals, generate campaigns faster, and make complex information understandable to humans who do not want to inspect twelve CSV files before coffee.

That last part deserves a closer look. The magic of LLMs is not only generation; it is interpretation and visualization: taking scattered campaign metrics, CRM notes, ad performance, website journeys, and pipeline movement, then translating all of it into something a marketer can actually act on.

We’ve all watched teams move from drowning in data to finally seeing the story inside it. A weekly report becomes a clear summary with the next steps. A pile of account activity becomes a ranked list of buying signals. A confusing funnel becomes a visual map of where leads disappear and why.

This article is about where LLMs genuinely help marketing teams today (there are no sci-fi promises, nothing about “replace your whole team by Thursday”). I’ve tried to add some real use cases, workflows, and examples of how large language models are powering modern marketing AI, especially when paired with smart visualization and good human judgment.

If your team has more data than clarity, you're in the right place.

What are LLMs and why should marketers care?

Let's start with a simple definition that doesn't require a computer science degree. A large language model is an AI system trained on enormous volumes of text data, designed to understand, generate, and reason about language. When you type a question into ChatGPT, Claude, or Google's Gemini, you're interacting with an LLM. Meta's Llama is another well-known example in the open-source world.

The ‘large’ part matters because it refers to the number of parameters (think of these as the model's internal decision points). GPT-3 has 175 billion; OpenAI has not published the figure for GPT-4. That scale is what allows these systems to do more than simple pattern matching. They can summarize a fifty-page report, draft an email that sounds like a human wrote it, interpret ambiguous questions, and synthesize information from multiple sources into a coherent answer.

Now, here's why this is different from the marketing automation tools you've used for the past decade. Your old tools could trigger an email when someone downloads a whitepaper. They could segment a list by job title or company size. What they couldn't do is understand context. They couldn't read between the lines of engagement data and tell you why a campaign underperformed, or generate a genuinely tailored message for a specific buying persona at a specific stage of the funnel.

LLMs can do those things because they process intent (not just inputs). They don't just see that a visitor hit your pricing page three times. They can connect that behavior with CRM notes, ad engagement, and content consumption patterns, then produce a plain-English summary of what's happening with that account.

For B2B marketers specifically, this is a meaningful leap. Your world runs on fragmented data spread across a dozen platforms, long sales cycles where context gets lost between handoffs, and a constant pressure to produce more content and better insights with the same headcount. LLMs are well suited to exactly that kind of complexity, which is why large language model use cases have moved from experimental to essential in most forward-thinking marketing organizations.

Why do LLM use cases matter in B2B marketing?

Most B2B teams don't wake up thinking, "We need artificial intelligence." They wake up thinking, "We need to stop spending four hours building a campaign report that nobody reads past slide three." The appeal of LLMs isn't the technology itself. It's the problems they quietly eliminate.

Let me list the ones I hear most often, because they tend to show up in nearly every marketing org I've worked with or spoken to:

- Too much campaign data, not enough insight

You've got dashboards everywhere, but translating that data into "here's what we should do next" still requires a human sitting down for hours.

- Weak lead qualification

MQLs flood in, but sales complains that half of them aren't real buyers. The scoring model hasn't been updated since 2022, and nobody trusts it.

- Slow content production

You need blog posts, LinkedIn ads, nurture sequences, webinar copy, and sales one-pagers. Your content team is two people, and one of them is also running events.

- Poor marketing-to-sales handoff

By the time a lead reaches sales, the context of how they got there is either missing or buried in Salesforce notes nobody reads.

- Attribution confusion

Everyone argues about which channel "gets credit." Nobody can clearly explain the buyer journey from first touch to closed deal.

- Low personalization at scale

You know personalization works, but doing it properly for fifty target accounts with different buying committees feels impossible without tripling your team.

These aren’t one-off complaints. Most of us working in B2B marketing would agree that they are exactly the problems where LLM business use cases deliver the fastest results.

The enterprise adoption numbers reflect this. Generative AI marketing tools have moved from pilot programmes to production deployments across mid-market and enterprise companies at a pace that's genuinely unusual for B2B tech adoption. The reason is straightforward: the ROI shows up in weeks, not quarters. When a marketer can ask a natural-language question and get a reporting summary instead of building a spreadsheet, the time savings are immediate and visible. When a content team can repurpose a single long-form piece into eight distribution-ready assets in an afternoon, the productivity gain is obvious. LLMs aren't solving a theoretical problem. They're solving the specific, frustrating bottlenecks that make marketing teams feel perpetually underwater.

12 high-impact LLM use cases for marketing teams

This is the section you probably scrolled down looking for, and it's the longest one for good reason. These twelve LLM marketing use cases represent the most practical, highest-ROI applications I've seen across B2B teams of different sizes and maturity levels. Some are simple to implement today. Others require deeper integration with your data stack. All of them are real, not theoretical.

- Campaign performance summaries

Every marketing team has dashboards. Very few have dashboards that actually tell a story. The gap between "here's a chart showing CTR over time" and "here's what happened and why" is enormous, and it's a gap that LLMs close remarkably well.

Imagine pointing an LLM at your campaign data and getting back something like: "CTR on the enterprise LinkedIn campaign dropped 18% week-over-week, likely due to audience fatigue. The same creative has been running for six weeks without rotation. Meanwhile, the mid-market campaign saw a 12% lift after the new case study ad was introduced." That's not a generic summary. It's the kind of insight that used to require a senior analyst sitting down for an hour with the data. LLMs can produce it in seconds, and they can do it in plain English that your VP can actually act on during a meeting.

The key here is connecting the LLM to your actual campaign data, not just asking ChatGPT to interpret a screenshot. When AI marketing automation platforms integrate LLMs with live data feeds from Google Ads, LinkedIn, and your CRM, the summaries become genuinely actionable.
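To make "connecting the LLM to your actual campaign data" a bit more concrete, here is a minimal sketch of one way to do the grounding step: compute the week-over-week numbers in code, then hand the model only those verified facts to narrate. Everything in it is an assumption for illustration (the `WeeklyStats` shape, the campaign names, the sample figures, and the prompt wording), and the actual call to an LLM is left out because it depends on the provider you use.

```python
from dataclasses import dataclass


@dataclass
class WeeklyStats:
    campaign: str
    week: str          # zero-padded ISO week label, e.g. "2024-W14"
    impressions: int
    clicks: int


def ctr(stats: WeeklyStats) -> float:
    """Click-through rate as a fraction; 0.0 when there were no impressions."""
    return stats.clicks / stats.impressions if stats.impressions else 0.0


def week_over_week_facts(rows: list[WeeklyStats]) -> list[str]:
    """Return one factual line per campaign comparing its two latest weeks."""
    by_campaign: dict[str, list[WeeklyStats]] = {}
    for row in sorted(rows, key=lambda r: r.week):
        by_campaign.setdefault(row.campaign, []).append(row)

    facts = []
    for name, weeks in by_campaign.items():
        if len(weeks) < 2 or ctr(weeks[-2]) == 0:
            continue  # nothing to compare against
        prev, curr = weeks[-2], weeks[-1]
        change = (ctr(curr) - ctr(prev)) / ctr(prev) * 100
        facts.append(
            f"{name}: CTR {ctr(curr):.2%} in {curr.week}, "
            f"{change:+.1f}% vs {prev.week}"
        )
    return facts


def build_summary_prompt(facts: list[str]) -> str:
    """Wrap the computed facts in instructions for whichever LLM you use."""
    lines = "\n".join(f"- {fact}" for fact in facts)
    return (
        "Summarize this week's campaign performance for a VP of Marketing.\n"
        "Use only the facts below and do not invent numbers or causes.\n"
        f"{lines}"
    )


# Hypothetical sample data, not real campaign results.
sample = [
    WeeklyStats("enterprise-linkedin", "2024-W13", 10_000, 200),
    WeeklyStats("midmarket-linkedin", "2024-W13", 5_000, 100),
    WeeklyStats("enterprise-linkedin", "2024-W14", 10_000, 164),
    WeeklyStats("midmarket-linkedin", "2024-W14", 5_000, 112),
]
facts = week_over_week_facts(sample)
prompt = build_summary_prompt(facts)
```

The design choice worth noting is that the arithmetic happens outside the model. The LLM is asked to explain numbers it was given, not to calculate them, which is one practical way to reduce the risk of a confident but wrong summary.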

- Ad copy generation and testing

If you've ever sat in a room trying to brainstorm twelve different LinkedIn ad variants for the same product, you know it starts strong and gets painful by variant number five. LLMs excel at this because they can take a single brief and produce dozens of variations, each tailored to a different persona, funnel stage, or pain point.

The real power isn't just volume, though. It's the ability to systematically vary one element at a time. You can ask for five versions that change only the hook, five that change the CTA, and five that shift the value proposition. That structure makes A/B testing far more rigorous than the "let's try two headlines and see what happens" approach most teams default to. A content strategist still needs to review, edit, and approve the output. But they're starting from twelve solid drafts instead of a blank page, and that changes the velocity of your creative pipeline entirely.

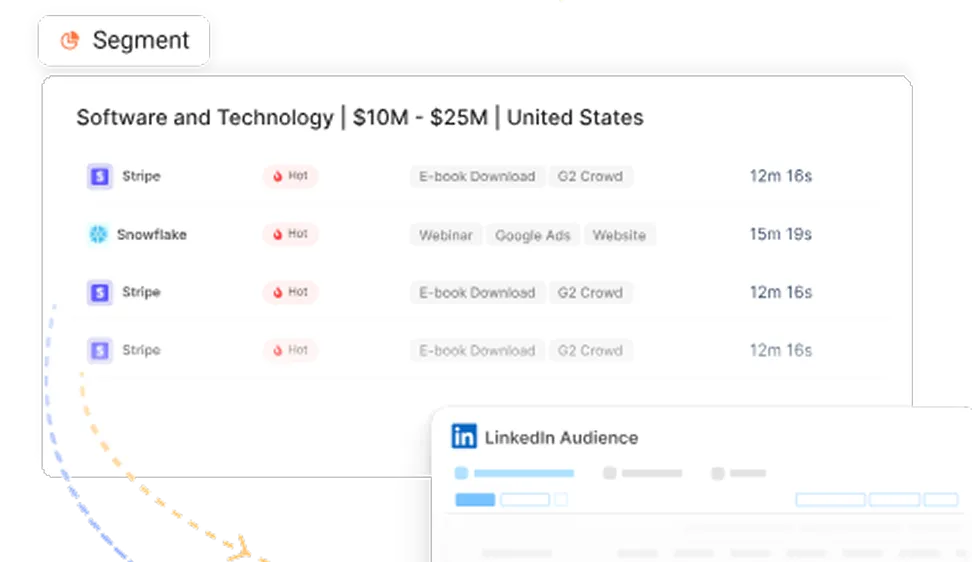

- Audience segmentation

Traditional segmentation relies on firmographic filters: industry, company size, and job title. Those are useful starting points, but they miss the behavioral signals that actually predict buying intent. An LLM connected to your engagement data can group leads by much richer criteria.

For example, instead of "all VPs of Marketing at companies with 500+ employees," an LLM-powered segmentation might surface "accounts where three or more contacts have engaged with pricing content in the past two weeks and also attended a webinar." That's a fundamentally different, and more useful, way to think about your audience. It moves segmentation from static lists to dynamic clusters that reflect what accounts are actually doing, not just what they look like on paper.
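The example segment above can be written down as an explicit rule, which is a useful sanity check on whatever an LLM-powered tool returns. This is a minimal sketch under stated assumptions: the `Engagement` record, the event names `pricing_view` and `webinar_attended`, and the reading that the webinar can have happened at any time (only the pricing engagement is limited to the two-week window) are all illustrative choices, not a description of any specific product.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Engagement:
    account: str
    contact: str
    kind: str   # hypothetical event names: "pricing_view", "webinar_attended"
    day: date


def dynamic_segment(
    events: list[Engagement],
    today: date,
    window_days: int = 14,
    min_contacts: int = 3,
) -> list[str]:
    """Accounts with enough distinct contacts on pricing content recently
    that have also attended a webinar (at any time)."""
    cutoff = today - timedelta(days=window_days)
    pricing_contacts: dict[str, set[str]] = defaultdict(set)
    webinar_accounts: set[str] = set()

    for event in events:
        if event.kind == "pricing_view" and cutoff <= event.day <= today:
            pricing_contacts[event.account].add(event.contact)
        elif event.kind == "webinar_attended":
            webinar_accounts.add(event.account)

    return sorted(
        account
        for account, contacts in pricing_contacts.items()
        if len(contacts) >= min_contacts and account in webinar_accounts
    )


# Hypothetical sample events.
today = date(2024, 4, 15)
events = [
    Engagement("acme", "ana", "pricing_view", date(2024, 4, 10)),
    Engagement("acme", "ben", "pricing_view", date(2024, 4, 11)),
    Engagement("acme", "cy", "pricing_view", date(2024, 4, 12)),
    Engagement("acme", "ana", "webinar_attended", date(2024, 3, 20)),
    Engagement("globex", "dee", "pricing_view", date(2024, 4, 12)),
    Engagement("globex", "dee", "webinar_attended", date(2024, 4, 1)),
]
segment = dynamic_segment(events, today)
```

Because the segment is recomputed from events each time it runs, membership changes as behavior changes, which is the "dynamic cluster" idea in contrast to a static firmographic list.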

- Lead qualification

Lead scoring has been around for years, but let's be honest about how well it works at most companies. The model was built two years ago based on assumptions that may no longer hold, the weights haven't been recalibrated, and sales still ignores half the MQLs because they don't feel like real buyers.

LLMs offer a different approach to qualification. Instead of rigid point-based scores, they can assess intent by reading across multiple signals: web visit patterns, ad engagement, content consumption, CRM activity, even the language used in form fills. A high-intent account isn't just one that hit a point threshold. It's one where the behavior pattern suggests active evaluation, and LLMs are remarkably good at detecting those patterns when given access to the right data. This is one of the LLM examples that tends to surprise teams the most, because the improvement over legacy scoring is so visible.

- Conversational reporting

This is the use case that makes analytics feel like it's finally caught up with how humans actually think. Instead of navigating seven dashboard tabs and three filters to answer a question, you simply ask: "Which campaigns influenced enterprise pipeline last quarter?"

The LLM pulls from your connected data sources, synthesizes the answer, and delivers it in natural language. No pivot tables, no export-to-Excel ritual, no waiting for the analytics team to have bandwidth. The question-and-answer format also surfaces insights you might not have thought to look for. When a team starts asking ad hoc questions, they often discover patterns that pre-built dashboards never would have surfaced, because dashboards only answer questions someone thought to build in advance.

- Content repurposing

A single well-researched blog post contains enough material for a newsletter, three LinkedIn posts, a webinar summary, an email nurture sequence, and a sales one-pager. The problem is that repurposing takes time, and most content teams are too busy creating the next piece to properly distribute the last one.

LLMs make this process nearly instant. You feed in the original blog, specify the output formats, and get back drafts tailored to each channel. The LinkedIn version is shorter and punchier. The email version leads with a pain point. The sales one-pager focuses on competitive differentiation. Each output still needs a human pass for tone, accuracy, and brand consistency, but the heavy lifting of reformatting and rewriting is handled. For teams where content production is a bottleneck (which is nearly all of them), this is one of the fastest paths to visible ROI.

- SEO content planning

If you've ever spent a day doing keyword research, clustering topics, mapping search intent, and drafting content outlines, you know it's valuable work that feels like it takes forever. LLMs compress the entire workflow. They can take a seed keyword, generate semantically related clusters, identify gaps in your current content, assess whether each keyword signals informational or commercial intent, and draft a preliminary outline, all in a single session.

The output isn't perfect. You'll still need a strategist to validate the clusters and an editor to refine the outlines. But the starting point is dramatically better than a blank spreadsheet and a Semrush export. Generative AI marketing tools that combine LLMs with real-time search data are making this even more powerful, because they can ground their recommendations in actual ranking data rather than just language patterns.

- Chatbots and buyer assistants

Chatbots have existed for years, but the old ones were frustrating because they could only follow pre-programmed decision trees. If a visitor asked something outside the script, the bot would shrug (metaphorically) and suggest emailing support. LLM-powered chatbots are a different experience. They can understand nuanced questions, draw from your knowledge base, and respond conversationally.

For B2B websites, this means faster qualification. A visitor lands on your pricing page, asks a specific question about integrations, and gets a genuine answer instead of "please book a demo." The chatbot can assess whether the visitor matches your ICP, route high-intent conversations to sales in real time, and log the entire interaction in your CRM. It's not replacing your SDR team. It's handling the first ninety seconds of every conversation so your team can focus on the ones that matter.

- Sales enablement

Sales reps spend a surprising amount of time doing research before meetings. They're clicking through LinkedIn profiles, scanning CRM notes, reviewing recent engagement history, and trying to piece together a picture of the account. It's necessary work, but it's tedious and inconsistent.

An LLM can generate an account brief in minutes by pulling from CRM data, website activity, ad engagement, and publicly available information. The brief might include key contacts and their roles, recent content interactions, relevant case studies to reference, and potential objections based on the account's industry. The rep walks into the meeting prepared, and the preparation didn't eat two hours of their afternoon. Marketing AI tools that offer this kind of sales enablement bridge the gap between marketing's data and sales' conversations, which is where most revenue teams lose context.

- Attribution narratives

Attribution has always been a numbers problem. Marketing attribution AI dashboards show you percentages and channel breakdowns, but they rarely tell a story. An LLM can take the same underlying attribution data and generate a narrative: "This deal was first influenced by an organic search visit in January. The buying committee expanded after three contacts attended our webinar in March. LinkedIn retargeting kept the account engaged through April, and the closed-won came after a direct outreach by the AE following a pricing page visit."

That narrative is far more useful in a pipeline review than a bar chart showing "40% organic, 30% paid, 30% direct." It helps everyone in the room understand the journey, not just the allocation. And it makes attribution conversations less adversarial, because the focus shifts from "who gets credit" to "what actually happened." Attribution debates sometimes resemble group projects where everyone claims credit for the final result. Narratives bring the receipts.

- Intent detection

Anonymous website visitors are one of the most underutilized data sources in B2B marketing. You know someone from a target account visited your comparison page, your pricing page, and your integrations docs, all in one session. That's a high-intent signal, but most teams can't act on it quickly enough.

LLMs can summarize anonymous visitor behavior in real time. Instead of a raw event log showing page URLs and timestamps, you get a concise summary: "An unknown visitor from Acme Corp visited four product pages and the pricing calculator in a single session, suggesting active evaluation." That summary can trigger an alert to your SDR team, add the account to a priority ABM list, or kick off a personalized ad sequence. The raw data was always there. The LLM just makes it legible and actionable at the speed your revenue team needs.

- Forecasting inputs

Pipeline forecasting in most B2B companies is a blend of CRM data, gut feeling, and whatever the sales manager heard on the last call. LLMs can improve the inputs to that process by surfacing patterns humans tend to miss. They can read across CRM notes, campaign engagement trends, and pipeline velocity data to flag accounts that are accelerating, stalling, or at risk.

They won't replace your forecasting model, but they'll make it smarter. For instance, an LLM might notice that accounts in a specific industry segment tend to close faster after attending a particular webinar, or that deals with more than three engaged contacts move through the pipeline at twice the rate. Those insights get buried in spreadsheets. An LLM surfaces them in plain language, which means they actually get used.

Visualizing how LLMs fit into the marketing funnel

One thing that makes LLM use cases hard to evaluate is that they don't sit neatly in one place. They're not a "top-of-funnel tool" or a "bottom-of-funnel tool." They stretch across the entire journey, and visualizing that spread helps you decide where to deploy them first.

Here's how LLM capabilities map to each stage of a typical B2B marketing funnel:

- ToFu (top of funnel)

At the awareness stage, the goal is reach and relevance. LLMs contribute here through:

- Topic research and ideation. Generating content ideas based on keyword clusters, competitor gaps, and audience questions.

- SEO clustering. Grouping related keywords by intent and suggesting content hierarchies.

- Ad copy ideation. Producing multiple creative variants for awareness campaigns across LinkedIn, Google, and programmatic channels.

- Awareness content generation. Drafting blog posts, social content, and thought leadership pieces that attract the right audience.

- MoFu (middle of funnel)

Once someone's aware of you, the challenge becomes qualification and personalization. This is where LLMs start pulling from your first-party data:

- Lead scoring. Assessing buying intent from engagement patterns rather than static firmographic rules.

- Personalization. Generating tailored messaging for different personas and account segments.

- Nurture email sequencing. Drafting email sequences that adapt to where a contact is in their journey.

- Buyer intent analysis. Interpreting behavioral signals to identify accounts that are moving from casual interest to active evaluation.

- BoFu (bottom of funnel)

At the decision stage, speed and precision matter most. LLMs help revenue teams prioritise and act:

- Opportunity prioritisation. Flagging which deals are most likely to close based on engagement and pipeline data.

- Pipeline summaries. Generating plain-English overviews of pipeline health for leadership reviews.

- Attribution insights. Building narrative-style attribution stories that explain how deals came together.

- Expansion recommendations. Identifying cross-sell and upsell opportunities within existing accounts based on product usage and engagement data.

Traditional funnel vs LLM-powered funnel

The takeaway: LLMs don't replace any single tool in your stack. They sit on top of your existing systems and make each stage faster, smarter, and more connected. The biggest gains come when the same LLM layer has access to data across all three stages, because that's when it can connect a ToFu blog visit to a MoFu webinar registration to a BoFu pipeline opportunity and tell you the full story.

LLM use cases for ABM and revenue teams

Account-based marketing is where LLMs go from "nice to have" to "how did we do this before?" The reason is simple: ABM requires deep account-level intelligence, and generating that intelligence manually is brutally time-consuming. Account-based marketing AI that leverages LLMs changes the economics of ABM entirely.

Here's what that looks like across the core ABM workflows:

- Identifying engaged accounts

Instead of manually reviewing dashboards to spot which target accounts are showing engagement, an LLM can continuously monitor your data and surface accounts where multiple buying signals are converging. Think of it as a filter that's always running, always watching for the combination of ad clicks, website visits, content downloads, and email opens that indicate a real evaluation is underway.

- Summarising account journeys

Every ABM strategist wants to understand the story of an account: when did they first engage, who's involved, what content have they consumed, where are they in the buying process? LLMs can pull that story together from disparate data sources and present it as a clear, readable narrative. No more stitching together Salesforce records, Google Analytics sessions, and LinkedIn campaign data by hand.

- Building personalized outreach prompts

Once you understand an account's journey, you need to act on it. LLMs can generate personalized outreach drafts for each account, referencing the specific content they've engaged with, the pain points their industry faces, and the stage of the buying cycle they appear to be in. The SDR still customizes and sends, but they're starting from a tailored draft instead of a blank email.

- Detecting buying committee signals

In enterprise B2B, you're rarely selling to one person. You're selling to a committee of five, eight, or twelve people. LLMs can flag when multiple contacts from the same account are engaging simultaneously, which is one of the strongest buying signals in ABM. If the VP of Marketing downloaded your ROI guide, the Director of Ops attended a webinar, and someone from IT visited your integrations page, that's a committee-level signal that deserves immediate attention.

- Surfacing hidden opportunities

Some of the best pipeline sits in accounts you're not actively targeting. LLMs can scan your engagement data and identify accounts that match your ICP and are exhibiting buying behavior, even if they're not on your named account list. The query might look something like: "Show me Fortune 500 accounts with ad engagement, repeat visits, and open opportunities." That's the kind of cross-data synthesis that would take a human analyst half a day. An LLM delivers it in seconds.

What makes these LLM use cases for B2B marketing particularly powerful for revenue teams (not just marketing) is that the insights flow downstream. The account summary that marketing generates feeds directly into the SDR's outreach. The buying committee signal triggers a coordinated play between marketing and sales. The pipeline data that the LLM surfaces gets discussed in the weekly revenue review. When LLMs sit at the intersection of marketing and sales data, they become a connective layer that most organizations desperately need.

How Factors.ai uses LLM intelligence

Everything we've discussed so far has an important catch: LLMs are only as useful as the data they can access. A standalone LLM like ChatGPT is brilliant at general language tasks, but it doesn't know anything about your pipeline, your campaigns, or your accounts. The moment you need it to answer a question like "which campaigns drove enterprise pipeline last quarter," it's completely blind.

This is where Factors.ai takes a different approach. Instead of starting with a general-purpose prompt interface, Factors connects LLM intelligence directly to your first-party revenue data. Your CRM, your ad platforms, your web analytics, your account engagement signals are all part of the foundation the LLM reasons from.

Here's what that enables in practical terms:

- Ask questions across your entire GTM stack. You can query campaigns, CRM records, and website activity in natural language, and get a synthesized answer that draws from all of them.

- Summarise account journeys automatically. Instead of clicking through five tools to understand what happened with a specific account, Factors generates a timeline-style summary of every meaningful interaction.

- Detect high-intent accounts in real time. The system watches for converging signals (repeat visits, ad engagement, content consumption, CRM activity) and flags accounts that warrant immediate attention.

- Recommend audience expansion. Based on the characteristics of accounts already in your pipeline, Factors can suggest similar accounts you should be targeting.

- Explain ad performance in context. Rather than just showing you LinkedIn or Google Ads metrics, it connects performance data to downstream pipeline outcomes. You see which campaigns drove meetings, not just clicks.

- Turn noisy data into action. The core value is compression. Hundreds of data points about an account get distilled into a clear, actionable summary that your team can use immediately.

Most AI in the market starts with prompts. Factors starts with your actual revenue data, which means the LLM's outputs are grounded in your specific business context, not generic training data. That distinction is what separates interesting from useful. For marketing teams evaluating marketing AI tools, the question shouldn't be "does it use AI?" but rather "does it connect to my data and workflows?"

Risks, limits, and governance you can't ignore

I'd be doing you a disservice if I painted LLMs as an uncomplicated win. They're powerful, but they come with real risks that every marketing team needs to understand before scaling adoption. Ignoring these doesn't make you innovative. It makes you reckless.

- Hallucinations are the biggest trust risk

LLMs can generate confident, well-structured text that is completely wrong. They don't "know" facts the way a database does. They predict likely language sequences, and sometimes that prediction leads to fabricated statistics, invented sources, or subtly inaccurate claims. In a marketing context, this could mean an account brief that references a case study you never published, or a campaign summary that misattributes performance data. Every LLM output that touches your buyers, your leadership team, or your published content needs human review. No exceptions.

- Privacy concerns are real and evolving

When you feed customer data, CRM records, or engagement data into an LLM, you need to understand where that data goes and how it's processed. Depending on the provider, plan, and settings, a public LLM service may use your inputs for model training unless you've explicitly opted out. For B2B companies handling enterprise client data, this isn't a hypothetical risk. It's a compliance issue that your legal and security teams need to weigh in on before you start piping account data into any AI tool.

- Over-automation creates a different kind of problem

The temptation to automate everything is strong, especially when the technology is impressive. But marketing that feels fully automated also feels generic, and your buyers can tell. If every nurture email, every ad variant, and every sales outreach is AI-generated without meaningful human input, your brand starts to sound like everyone else's brand. The goal is to automate the tedious parts and preserve human judgment for the strategic ones.

- Generic outputs are the default without grounding

A standalone public LLM draws from general training data. It doesn't know your product positioning, your competitive landscape, or your ideal customer profile. Without grounding in your proprietary data, its outputs will be plausible but generic. This is why connected systems (LLMs integrated with your CRM, ad platforms, and analytics tools) dramatically outperform standalone chat interfaces for business applications. The model needs your context to produce your answers.

- Human review is NOT optional, and needs to be part of the structure

The most effective LLM workflows I've seen treat AI-generated content as a first draft, never as a final product. A human reviews every summary, every outreach draft, every attribution narrative. That review layer is where quality, accuracy, and brand voice get enforced. Teams that skip it eventually publish something embarrassing, and the cleanup costs more than the time they saved.

No attribution model answers every question perfectly, and anyone who tells you otherwise is probably selling one. The same principle applies to LLMs. They're a tool, not an oracle. The teams that deploy them well are the ones that understand where the model's confidence is justified and where it needs a human gut check.

How do you choose the right LLM use case to start with?

With twelve use cases on the table, it's tempting to try all of them at once. Resist that temptation. The fastest path to real impact is picking one or two use cases, proving value, and then expanding. The question is how to choose which ones come first.

Here's a simple scorecard framework I'd recommend:

Based on this framework, I'd recommend most B2B teams start with one of these four use cases:

- Reporting summaries

The data already exists in your dashboards. The implementation is lightweight. The time savings are immediately obvious to leadership. And if the summary is slightly off, a human catches it before it reaches anyone external.

- Lead scoring and qualification

If you have decent engagement data and a CRM with pipeline records, an LLM can dramatically improve how you identify high-intent accounts. The ROI shows up in conversion rates and sales feedback within a quarter.

- Content repurposing

You've already written the source material. The LLM just reformats it. The risk is low because every output gets an editorial review anyway, and the productivity gain is massive for small content teams.

- Sales briefs

This one tends to win over skeptics quickly because the sales team sees immediate value. Reps who previously spent thirty minutes preparing for a call now get a briefing document generated in minutes. It's the kind of cross-functional win that builds internal support for broader LLM adoption.

The use cases that should come later, not because they're less valuable, but because they require more integration and governance, include ad copy generation at scale (needs brand guidelines baked in), chatbots (need knowledge base integration and QA testing), and forecasting inputs (need clean, well-structured pipeline data). Start where the data is ready and the stakes are manageable. Scale from there.
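One way to make that prioritization concrete is a simple weighted score per use case. The sketch below is illustrative only: the four criteria (data readiness, ease of implementation, speed to value, low risk), the weights, and the 1-to-5 ratings are assumptions chosen to mirror the reasoning above, not a reproduction of any official scorecard. Swap in your own team's ratings.

```python
from dataclasses import dataclass

# Hypothetical weights (assumption); they sum to 1.0. Tune to your priorities.
WEIGHTS = {
    "data_readiness": 0.35,
    "ease_of_implementation": 0.25,
    "speed_to_value": 0.25,
    "low_risk": 0.15,
}


@dataclass
class UseCase:
    name: str
    ratings: dict  # criterion name -> rating from 1 (poor) to 5 (strong)

    def score(self) -> float:
        # Weighted average of the ratings, rounded for readability.
        return round(sum(WEIGHTS[c] * self.ratings[c] for c in WEIGHTS), 2)


def rank(use_cases):
    """Return use cases sorted from highest to lowest score."""
    return sorted(use_cases, key=lambda u: u.score(), reverse=True)


# Illustrative ratings only; these are not measured values.
candidates = [
    UseCase("Chatbots", {
        "data_readiness": 2, "ease_of_implementation": 2,
        "speed_to_value": 3, "low_risk": 2,
    }),
    UseCase("Reporting summaries", {
        "data_readiness": 5, "ease_of_implementation": 5,
        "speed_to_value": 5, "low_risk": 4,
    }),
    UseCase("Content repurposing", {
        "data_readiness": 5, "ease_of_implementation": 4,
        "speed_to_value": 4, "low_risk": 5,
    }),
]

for use_case in rank(candidates):
    print(f"{use_case.name}: {use_case.score()}")
```

The exact numbers matter less than the conversation they force: if nobody can agree on a data readiness rating, that's usually a sign the use case belongs in the "later" bucket.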

Where are LLMs in marketing AI heading next?

The current generation of LLM use cases is largely reactive. You ask a question… you get an answer. You feed in data, you get a summary. You provide a brief… you get a draft. The next wave is about moving from reactive to autonomous, and it's closer than most marketers realize.

- Agentic workflows are the most significant shift on the horizon

Instead of a human prompting an LLM for each task, an AI agent will execute multi-step workflows on its own. Imagine telling a system: "Monitor our LinkedIn campaigns, flag any that drop below target CTR, generate three replacement ad variants, and queue them for review." The agent handles the monitoring, the analysis, the creative generation, and the routing. The human reviews and approves. That's not science fiction; early versions are already in production at some companies.
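To make the monitor, flag, generate, and queue loop tangible, here's a minimal sketch of a single agent step. Everything in it is hypothetical: the `Campaign` and `ReviewItem` shapes, the `run_agent_step` function, and especially `draft_variants_stub`, which stands in for a real LLM call and just returns labelled placeholder text. A production agent would pull live data from an ad platform and call an actual model.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Campaign:
    name: str
    impressions: int
    clicks: int

    @property
    def ctr(self) -> float:
        # Click-through rate; guard against campaigns with no impressions.
        return self.clicks / self.impressions if self.impressions else 0.0


@dataclass
class ReviewItem:
    campaign: str
    ctr: float
    variants: List[str]
    status: str = "pending_review"  # nothing ships without human approval


def draft_variants_stub(campaign: Campaign, n: int) -> List[str]:
    """Placeholder for an LLM call (assumption); returns dummy drafts."""
    return [f"[draft {i + 1}] replacement ad for {campaign.name}" for i in range(n)]


def run_agent_step(
    campaigns: List[Campaign],
    target_ctr: float,
    generate: Callable[[Campaign, int], List[str]] = draft_variants_stub,
    n_variants: int = 3,
) -> List[ReviewItem]:
    """Flag campaigns below target CTR and queue draft variants for review."""
    queue = []
    for campaign in campaigns:
        if campaign.ctr < target_ctr:
            variants = generate(campaign, n_variants)
            queue.append(ReviewItem(campaign.name, campaign.ctr, variants))
    return queue


# Made-up numbers for illustration.
campaigns = [
    Campaign("Q3 ABM - CFOs", impressions=10_000, clicks=35),
    Campaign("Webinar promo", impressions=8_000, clicks=72),
]
review_queue = run_agent_step(campaigns, target_ctr=0.005)
for item in review_queue:
    print(item.campaign, f"{item.ctr:.2%}", item.status)
```

Note that the agent's output is a review queue, not a published ad. The approval step stays with a person, which matches the "human reviews and approves" part of the workflow.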

- Autonomous campaign operations take this further

Think of budget reallocation that happens in real time based on pipeline impact, not just click metrics. Or nurture sequences that dynamically adjust their messaging based on an account's evolving engagement pattern. The human sets the strategy and the guardrails. The system handles the execution within those bounds.
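"Within those bounds" can be as simple as clamping whatever the system proposes. The sketch below assumes three hypothetical guardrails a human might set: a maximum percentage shift per adjustment, a budget floor, and a budget ceiling. The function name and parameters are illustrative, not taken from any particular platform.

```python
def clamp_budget_change(
    current: float,
    proposed: float,
    max_shift_pct: float,
    floor: float,
    ceiling: float,
) -> float:
    """Return the proposed budget, limited by human-set guardrails."""
    # Guardrail 1: never move more than max_shift_pct of the current budget.
    max_delta = current * max_shift_pct
    bounded = max(current - max_delta, min(proposed, current + max_delta))
    # Guardrail 2: never leave the absolute floor/ceiling range.
    return max(floor, min(bounded, ceiling))


# The system wants to nearly double spend; the guardrail caps the increase at 20%.
increase = clamp_budget_change(
    current=1000.0, proposed=1800.0, max_shift_pct=0.2, floor=500.0, ceiling=1500.0
)
# The system wants to almost zero out spend; the guardrail caps the cut at 20%.
decrease = clamp_budget_change(
    current=1000.0, proposed=100.0, max_shift_pct=0.2, floor=500.0, ceiling=1500.0
)
print(increase, decrease)
```

The strategy lives in the numbers a person chooses for the guardrails; the system only decides where to land inside them.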

- Multimodal analysis is another frontier

Current marketing LLMs mostly work with text. The next generation will process text, images, video, and call transcripts together. Your AI will be able to watch a recorded sales call, summarize the key objections, cross-reference them with the account's marketing engagement, and suggest a follow-up strategy. That's a level of synthesis that's impossible to do manually at scale.

- Predictive GTM copilots represent the endgame for many of these trends

Instead of separate tools for analytics, content, ABM, and forecasting, you'll have a unified intelligence layer that connects all of them. It won't just answer questions. It'll proactively surface recommendations: "Three enterprise accounts are showing accelerated engagement this week. Here's a recommended play for each one." AI that acts, not just answers, is the trajectory that every major platform is building towards.

The marketing teams that will benefit most from this next wave aren't the ones waiting for it to arrive. They're the ones building the data infrastructure, governance practices, and internal fluency with LLMs now, so that when agentic tools become production-ready, they can adopt them without starting from scratch.

In a nutshell…

We've covered a lot of ground, so here's a short summary of what I’d love for you to remember from this blog.

LLMs solve specific, expensive problems that B2B marketing teams face every day: slow reporting, weak lead qualification, content bottlenecks, fragmented data, and the chronic gap between what marketing knows and what sales can act on. The twelve use cases we walked through, from campaign summaries and ad copy generation to attribution narratives and forecasting inputs, aren't theoretical. They're in production at real companies, delivering measurable time savings and better decisions.

The funnel mapping exercise shows that LLMs aren't a single-stage tool. They add value at every point in the buyer's journey, but they get dramatically more powerful when they can connect data across stages. An LLM that can see a ToFu blog visit, a MoFu webinar registration, and a BoFu pricing page session, all for the same account, tells a story that no single dashboard ever could.

That’s where the real shift happens. Marketing teams have never lacked data. They’ve lacked context, speed, and the ability to turn scattered signals into clear next moves. LLMs help close that gap by acting less like a chatbot and more like an always-on analyst, strategist, and translator sitting inside your stack.

They can explain performance, spot patterns, surface opportunities, and make complex data easier to understand through summaries, visualizations, and recommendations. Suddenly, reporting becomes useful. Lead handoffs become sharper. Content becomes faster to produce. Decision-making becomes less political and more evidence-based.

But the companies that win with LLMs won’t be the ones using them for novelty. They’ll be the ones using them with clean data, smart workflows, clear guardrails, and human judgment still firmly in the driver’s seat.

So if you're wondering where to start, start small, start practical, and start where the pain is most expensive. Because the future of marketing AI probably won’t arrive as one dramatic revolution. It’ll arrive as dozens of frustrating tasks quietly disappearing from your week.

FAQs for LLM use cases and visualizations

Q1. How do LLMs differ from traditional marketing automation?

Traditional automation is rule-based (e.g., "If lead downloads X, send email Y"). It follows strict "if/then" logic. LLMs are intent-based; they can understand context, summarize unstructured data from CRM notes, and generate creative variations based on a single prompt. LLMs don't just move data, they interpret it.

Q2. Do I need a data scientist to use LLMs in my marketing team?

Not necessarily. Most modern marketing AI tools and platforms like Factors.ai have LLM capabilities built in via "Conversational Reporting" or "Account Summaries." You interact with the data using natural language, making the "data science" part invisible to the end user.

Q3. What is the highest ROI use case to start with?

The quickest wins are typically reporting summaries and content repurposing. Summarizing campaign performance in plain English saves hours of analyst time, and repurposing one webinar into ten social posts scales your content output without increasing headcount.

Q4. Can LLMs replace my SEO or Content team?

No. LLMs are "first-draft" machines. While they excel at brainstorming keyword clusters and drafting outlines, they lack the strategic empathy and proprietary insight required for high-performing B2B content. A human must always review for brand voice, factual accuracy (avoiding hallucinations), and competitive positioning.

Q5. How do LLMs map across the marketing funnel?

- ToFu (Top of Funnel): Ideation, SEO clustering, and multi-variant ad copy generation.

- MoFu (Middle of Funnel): Intent-based lead scoring and personalized nurture sequencing.

- BoFu (Bottom of Funnel): Opportunity prioritization, account-based briefs for sales, and attribution narratives.

Q6. What are "hallucinations," and how do I avoid them?

Hallucinations occur when an LLM confidently states something that is incorrect. The most reliable way to reduce them in marketing is "grounding": connecting the LLM to your specific first-party data (CRM, web analytics) so it reasons from your actual numbers rather than general internet training data. Grounding lowers the risk but doesn't remove it, so keep a human review step for anything that goes external.
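In practice, grounding often comes down to putting your own records into the prompt and telling the model to stay inside them. Here's a minimal sketch of that idea. The `build_grounded_prompt` helper, the record fields, and the "NOT IN DATA" fallback phrase are all assumptions made for illustration, and the code deliberately stops at building the prompt rather than calling any specific model API.

```python
import json


def build_grounded_prompt(question: str, records: list) -> str:
    """Wrap a question with first-party data and a stay-in-bounds instruction."""
    context = json.dumps(records, indent=2, sort_keys=True)
    return (
        "Answer using ONLY the data in the CONTEXT block.\n"
        "If the answer is not in the data, reply exactly: NOT IN DATA.\n\n"
        f"CONTEXT:\n{context}\n\n"
        f"QUESTION: {question}\n"
    )


# Hypothetical rows, as they might come out of a CRM or analytics export.
records = [
    {"account": "Acme Corp", "pricing_page_visits": 4, "open_opportunity": True},
    {"account": "Globex", "pricing_page_visits": 0, "open_opportunity": False},
]

prompt = build_grounded_prompt(
    "Which accounts visited the pricing page this week?", records
)
print(prompt)
```

The explicit fallback gives the model a sanctioned way to say "I don't know" instead of inventing a number, which also makes gaps in your data easier to spot during review.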

Q7. Is my company’s data safe when using LLMs?

This depends on the tool. Public versions of tools like ChatGPT may use your data for training. Enterprise-grade tools (and those using private API instances) typically keep your data siloed and don't use it to train the public model. Always check for SOC 2 compliance and review the data privacy agreement before connecting customer data.

Q8. How do LLMs help with Account-Based Marketing (ABM)?

LLMs excel at Account Journey Summarization. Instead of a rep clicking through 20 Salesforce records to understand an account’s history, an LLM can synthesize that data into a 3-paragraph "brief" that highlights which stakeholders are active and what they care about.

