Multi-touch attribution vs. media mix modeling: Which one is actually telling you the truth?

Compare Attribution Modeling vs. MMM. Learn which measurement model is right for your B2B SaaS, how to track the dark funnel, and why account-level data is the key to ROI.

TL;DR

- Multi-touch attribution (MTA) works at the granular, user level and is best for short-term tactical optimization.

- Media Mix Modeling (MMM) works at the macro level through aggregate statistical modeling and is best for long-term strategic planning and privacy-safe budget allocation.

- Standard models fail B2B due to long sales cycles and multi-person buying committees; Account-Based Attribution is the necessary bridge.

- Use MTA for daily execution and MMM for board-level reporting and ‘dark funnel’ estimation.

Picture the quarterly budget review. The CFO slides you a spreadsheet and asks, very calmly, which half of the $400,000 you spent on ads actually worked.

You glance at your attribution dashboard, which confidently tells you LinkedIn Ads drove 38% of pipeline. Your Google Ads dashboard, equally confident, says Google drove 51%. And your CMO, somewhere in the middle of all this, casually mentions the Media Mix Model report the agency ran last quarter, which apparently found that brand sponsorships drove 22% of revenue.

That's three sources, three different answers, and a room full of people waiting for you to reconcile them in real time. So you do what any seasoned marketer does: you take a slow sip of coffee, nod like you're processing a profound insight, and make a quiet mental note to update your LinkedIn profile later that evening.

If this sounds at all familiar, you've experienced the core tension sitting at the heart of modern marketing measurement: attribution modeling vs. media mix modeling. Both claim to tell you where your ROI is coming from, both sound methodologically rigorous when someone explains them in a slide deck, and both, somehow, keep producing different numbers every time you actually need them to agree.

So which one is right? And more importantly, which one should you actually be using? Let's break it all the way down.

First, why is measurement SO broken right now?

Before we dive into the two models, it's worth understanding why this debate even exists.

For a long time, marketers could lean heavily on last-click attribution. Someone clicks your ad, fills out a form, boom, the ad gets credit. Simple, clean, and deeply misleading.

Then multi-touch attribution came along and said, "Hey, actually, the five other things that happened before that final click mattered too." That was progress.

But then we hit the privacy wall.

- Third-party cookies started dying.

- iOS updates broke click tracking.

- Dark social (Slack, email, DMs, word of mouth) became a bigger driver of B2B pipeline than anyone wanted to admit.

- Cross-device journeys became near-impossible to stitch together cleanly.

Suddenly, attribution models, which depend on individual-level tracking, started looking patchier than a college Wi-Fi network.

And that's when people started taking Media Mix Modeling seriously again.

MMM has actually been around since the 1960s. CPG companies like P&G and Unilever were using regression models to understand TV vs. print vs. radio spend before most of us were alive. It just never made sense for B2B, which had smaller datasets, longer cycles, and less consistent spend patterns.

Now it's back, rebranded as a ‘privacy-safe measurement solution,’ and everyone's talking about it like it's brand new.

So let's actually understand what both of these are.

How to reconcile multi-touch attribution and media mix models? (The Golden Source Logic)

When your multi-touch attribution dashboard says LinkedIn drove $1M and your media mix model says it drove $2.5M, you aren't seeing an error; you're seeing Incremental Delta. Use this framework to reconcile the two:

- The Baseline (MTA): Use your Multi-Touch Attribution as your ‘Floor.’ This represents your Captured Demand, the revenue you can tie directly to logged digital touchpoints.

- The Incrementality Layer (MMM): The gap between your MTA and MMM is your Latent Demand. This represents the "Halo Effect" of your brand spend, word-of-mouth, and dark social that warmed up the account before they ever clicked an ad.

- The Decision Rule: Optimize ad creative and keywords based on MTA (granular), and set annual budget ceilings based on MMM (strategic).
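To make the arithmetic behind this framework concrete, here is a minimal sketch in Python. The function name `reconcile` and the keys it returns are hypothetical labels chosen for illustration; the dollar figures are the LinkedIn example from the paragraph above.

```python
def reconcile(mta_revenue: float, mmm_revenue: float) -> dict:
    """Split an MMM channel estimate into a tracked floor and an untracked gap.

    Illustrative only: the key names are hypothetical, not a standard.
    """
    gap = mmm_revenue - mta_revenue
    return {
        "captured_floor": mta_revenue,   # what MTA can tie to logged touchpoints
        "latent_gap": gap,               # what MMM estimates on top of that
        "latent_share_of_mmm": gap / mmm_revenue if mmm_revenue else 0.0,
    }


# LinkedIn example from the text: MTA says $1M, MMM says $2.5M.
linkedin = reconcile(mta_revenue=1_000_000, mmm_revenue=2_500_000)
print(linkedin)
```

In this example the gap is $1.5M, or 60% of the MMM estimate. A gap that large is a prompt to look at brand and dark-social activity, not proof that either model is wrong.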

What is attribution modeling?

Attribution modeling is the practice of assigning credit to the marketing touchpoints that influenced a conversion.

Every time a prospect interacts with your brand, clicks an ad, reads a blog, opens an email, attends a webinar, visits your pricing page, those events get logged. An attribution model decides how much credit each of those touchpoints gets for the eventual outcome (a demo booked, a deal closed, pipeline generated).

The most common attribution models

- Last-touch attribution gives 100% of the credit to the final touchpoint before conversion. It's easy to implement and wildly inaccurate for anything with a complex buying journey. For B2B SaaS with 3-6 month sales cycles, this is the equivalent of giving all the credit for a win to the person who showed up for the final handshake.

- First-touch attribution gives all the credit to the very first interaction. Great for understanding awareness, terrible for understanding what actually closed the deal.

- Linear attribution spreads the credit equally across every touchpoint. It's fair-ish, but it treats a 2-second ad view the same as a 45-minute product demo. That's not quite right.

- Time-decay attribution gives more credit to touchpoints closer to the conversion. The logic is that recent interactions matter more. This makes sense for short buying cycles. For enterprise B2B, it tends to massively undervalue awareness spend.

- U-shaped (position-based) attribution gives 40% credit to the first touch, 40% to the lead-creation touch, and splits the remaining 20% across everything in between. Better, but still not perfect.

- Data-driven attribution uses machine learning to assign credit based on your actual historical data. It looks at paths that converted vs. paths that didn't and figures out which touchpoints actually made a difference. This is the most accurate, but it needs enough volume to work.

- Multi-touch attribution (MTA) is often used as an umbrella term for any model that credits multiple touchpoints rather than just one. When B2B marketers talk about attribution, this is usually what they mean.
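The rule-based models above differ only in how they weight the same list of touchpoints. The sketch below applies them to one made-up journey. Everything in it is an assumption for illustration: the channel names, the dates, the $100,000 deal value, the 30-day half-life for time decay, and the simplification that the last touch in the list is the lead-creation touch for the U-shaped model. Data-driven attribution is left out because it needs a trained model rather than a fixed rule.

```python
from collections import defaultdict


def first_touch(n):
    """All credit to the first touchpoint."""
    return [1.0] + [0.0] * (n - 1)


def last_touch(n):
    """All credit to the final touchpoint."""
    return [0.0] * (n - 1) + [1.0]


def linear(n):
    """Equal credit to every touchpoint."""
    return [1.0 / n] * n


def u_shaped(n):
    """40% first, 40% last, 20% split across the middle touches.

    Assumes the last touch in the list is the lead-creation touch.
    """
    if n == 1:
        return [1.0]
    if n == 2:
        return [0.5, 0.5]
    middle = 0.2 / (n - 2)
    return [0.4] + [middle] * (n - 2) + [0.4]


def time_decay(days_before_conversion, half_life_days=30.0):
    """Weight halves for every `half_life_days` a touch sits before conversion."""
    raw = [0.5 ** (d / half_life_days) for d in days_before_conversion]
    total = sum(raw)
    return [r / total for r in raw]


def credit_by_channel(channels, weights, deal_value):
    """Turn per-touch weights into revenue credit per channel."""
    credit = defaultdict(float)
    for channel, weight in zip(channels, weights):
        credit[channel] += weight * deal_value
    return dict(credit)


# Hypothetical journey, ordered from first touch to last touch.
channels = ["LinkedIn Ads", "Blog", "Webinar", "Google Ads"]
days_before = [120, 60, 21, 2]  # days between each touch and the conversion
deal_value = 100_000

models = {
    "first_touch": first_touch(len(channels)),
    "last_touch": last_touch(len(channels)),
    "linear": linear(len(channels)),
    "u_shaped": u_shaped(len(channels)),
    "time_decay": time_decay(days_before),
}
for name, weights in models.items():
    credit = credit_by_channel(channels, weights, deal_value)
    print(name, {ch: round(value) for ch, value in credit.items()})
```

Running this on the same journey shows how far the credit moves between models, which is why the choice of model should follow how your buyers actually decide.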

What is attribution actually good at?

Attribution models shine when you need to understand:

- Which specific campaigns or ad sets are driving pipeline

- Which channels are influencing buyers at which stage of the funnel

- What the journey looks like for deals that actually close vs. those that don't

- How to optimize spend at the campaign level in near-real-time

Attribution is granular. It's person-level (or account-level, which matters a lot in B2B). It connects marketing activity to actual CRM outcomes when done right.

When you're trying to figure out "should I double down on LinkedIn retargeting this quarter or shift budget to webinars?" attribution is your answer.

What does attribution struggle with?

Here's where it gets honest.

Attribution depends on trackable touchpoints. If a buyer saw your CEO on a podcast, read three LinkedIn posts from your team, and heard your product mentioned in a customer Slack community before they ever visited your site, none of that shows up in your attribution model. The demo request looks like it came out of nowhere or, worse, is credited to a retargeting ad they clicked two days before booking a demo they had already decided on.

Attribution also struggles with:

- TV, billboards, out-of-home, and brand campaigns (non-click-based channels, obviously)

- Cross-device journeys where the cookie trail breaks

- Long buying cycles where impressions influence decisions months before conversion

- Privacy constraints limiting individual tracking

It's excellent data, but it's also incomplete data.

What is media mix modeling?

Media Mix Modeling (MMM), sometimes called Marketing Mix Modeling, is a statistical technique that uses historical data to estimate the contribution of different marketing channels to business outcomes.

Instead of tracking individuals, it looks at aggregate patterns over time.

The basic idea: if you spent more on Google Ads in Q3, and revenue went up in Q3, MMM will try to quantify how much of that revenue lift was caused by Google Ads versus seasonal trends, pricing changes, sales team activity, competitor movements, and every other variable that affects your business.

It does this through regression analysis. Essentially, it's a model that says ‘given everything we know about what changed over this time period, here's our best estimate of what each marketing lever contributed.’
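As a rough illustration of the regression idea, the sketch below fits an ordinary least-squares model to eight weeks of made-up data using only the Python standard library. All numbers are synthetic, and the revenue series is generated without noise from known coefficients so you can see the fit recover them. Real MMM work is far messier and usually adds carryover and saturation transforms, which are omitted here. The helper names `solve` and `ols` are hypothetical.

```python
def solve(a, b):
    """Solve the linear system a @ x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        # Partial pivoting: bring the largest remaining entry into place.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [x - factor * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def ols(rows, y):
    """Least-squares coefficients via the normal equations (X'X) beta = X'y."""
    x = [[1.0] + list(r) for r in rows]  # prepend an intercept column
    k = len(x[0])
    xtx = [[sum(row[i] * row[j] for row in x) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yi for row, yi in zip(x, y)) for i in range(k)]
    return solve(xtx, xty)


# Synthetic weekly data, in $k. All values are invented for illustration.
google = [10, 12, 8, 15, 11, 9, 14, 13]
linkedin = [5, 4, 6, 5, 7, 3, 6, 4]
peak_season = [0, 0, 1, 1, 0, 0, 1, 1]  # 1 = seasonal high, a non-marketing driver

# Revenue built from known effects: baseline 50, 2.0 per Google $, 3.0 per LinkedIn $,
# and a 20 uplift in peak season.
revenue = [50 + 2.0 * g + 3.0 * l + 20 * s
           for g, l, s in zip(google, linkedin, peak_season)]

beta = ols(list(zip(google, linkedin, peak_season)), revenue)
baseline, google_effect, linkedin_effect, season_effect = beta

print("estimated revenue per Google $:", round(google_effect, 3))
print("estimated revenue per LinkedIn $:", round(linkedin_effect, 3))
print("Google contribution over the period:", round(google_effect * sum(google), 1))
```

The point of the seasonal flag is that the model separates the seasonal uplift from the channel effects instead of handing that revenue to whichever channel happened to be running at the time.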

What goes into a media mix model?

A standard Media Mix Model pulls in:

- Marketing spend data by channel (Google, LinkedIn, Meta, TV, events, etc.)

- Revenue or pipeline data over the same period

- External variables like seasonality, economic conditions, or competitor activity

- Internal variables like pricing changes, product launches, or sales headcount

The model runs regressions across all of this to produce contribution curves for each channel, showing not just whether a channel contributed to revenue, but also whether you're currently under- or over-investing in it relative to its point of diminishing returns.

That last part is genuinely useful. Knowing that your LinkedIn spend is past its saturation point while your email nurture is under-invested is the kind of insight that changes budget conversations.
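A small sketch of what "past its saturation point" means in numbers. It assumes an exponential saturation curve, which is one common shape for a response curve but not necessarily the one a given MMM uses. The `cap` and `scale` parameters and all spend figures are invented; in a real model they would be estimated from your data.

```python
import math


def response(spend, cap, scale):
    """Revenue attributed to a channel at a given spend, flattening toward `cap`."""
    return cap * (1.0 - math.exp(-spend / scale))


def marginal_return(spend, cap, scale):
    """Extra revenue from one more unit of spend (the slope of the curve)."""
    return (cap / scale) * math.exp(-spend / scale)


# Hypothetical channels, all figures in $k per month.
linkedin = {"spend": 150, "cap": 500, "scale": 50}
email = {"spend": 10, "cap": 200, "scale": 40}

for name, ch in [("LinkedIn", linkedin), ("Email nurture", email)]:
    slope = marginal_return(ch["spend"], ch["cap"], ch["scale"])
    status = "over-invested" if slope < 1.0 else "room to grow"
    print(f"{name}: marginal return {slope:.2f} per extra $ ({status})")
```

With these made-up parameters, the next dollar on LinkedIn returns less than a dollar while the next dollar on email returns several, which is the kind of comparison a contribution curve makes possible.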

What is media mix modeling actually good at?

Media Mix Modeling is built for:

- Long-term budget planning (quarterly or annual)

- Understanding the contribution of channels that aren't trackable at the individual level (brand, events, offline)

- Separating the signal of your marketing from other business variables (seasonality, sales team size, product changes)

- Presenting a defensible, privacy-safe measurement story to your CFO or board

- Quantifying the halo effect of brand investment on performance channels

It's also better for understanding saturation, the point at which spending more on a channel stops generating proportional returns. Attribution models don't capture this well because they measure what happened, not what would happen if you spent more or less.

What does the media mix model struggle with?

MMM is expensive, slow, and requires a lot of historical data to produce reliable outputs. A proper MMM typically needs:

- At least 18-24 months of consistent spend data across channels

- Enough variation in spend over that period for the model to detect relationships

- A data science team or a specialized vendor to build and maintain it

For most early- to mid-stage B2B SaaS companies, that data doesn't exist yet, or the investment doesn't make sense relative to the total budget being measured.

MMM also doesn't tell you what to do tomorrow. It tells you what worked over the last six to eighteen months. By the time you have results, the market has shifted, you've changed your ICP, or your competitor has launched a new product.

And critically: Media mix modeling can't show you account-level behavior. It can tell you that LinkedIn contributed 27% to last year's revenue. It cannot tell you which accounts engaged with your LinkedIn ads, or what content they saw before they converted. That's not what it's designed for.

What are the core differences between Media Mix Modeling and Attribution Modeling?

Let's be direct about how these two approaches actually differ.

Here’s the TL;DR version: attribution tells you what's happening at the ground level. MMM tells you what's been happening at the sky level. Both are describing the same forest, just from very different altitudes.

Multi-Touch Attribution vs Marketing Mix Modeling

You'll often hear the debate framed specifically as multi-touch attribution vs marketing mix modeling, and it's worth being precise here.

Multi-touch attribution is a specific class of attribution models that give credit to more than one touchpoint in a conversion path. It's distinct from simpler models, such as last-click.

The reason this comparison gets its own framing is that MTA and MMM both try to answer the same core question (what's driving my results?) but from fundamentally opposite methodological directions.

Multi-touch attribution builds up: it starts with individual user events and aggregates them into channel-level credit.

Media mix modeling builds down: it starts from aggregate business outcomes and disaggregates to channel-level contribution.

Because they work from opposite directions, they often produce different answers, sometimes wildly different. This is not a bug. It's because they're measuring different things. MTA captures trackable, direct-response-driven activity. MMM captures the total contribution including the stuff that never produced a click.

For a B2B SaaS company running both a performance program and a brand/content program, the gap between MTA and MMM results is often the size of your brand investment. Your attribution model is essentially unable to see it. Your MMM is trying to estimate it.

Why do both models break in B2B specifically?

Here's something that doesn't get said enough: both of these models were largely designed for B2C, and they need significant adaptation to work properly in a B2B context.

- The multi-stakeholder problem

In B2B, a single ‘conversion’ involves multiple people. A $100K SaaS deal might have a champion, an economic buyer, an IT approver, and three end users who each had touchpoints with your marketing over six months.

Standard attribution models track at the user level. If your champion clicked a Google ad and your economic buyer found you through LinkedIn, your model might credit Google and miss LinkedIn entirely, because it's treating them as separate journeys rather than one account-level decision.

Multi-touch attribution that operates at the account level solves this. But most out-of-the-box attribution setups don't work this way.
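To show the difference between user-level and account-level views, here is a minimal sketch that rolls individual touches up into one journey per account. Using the email domain as the account key is a simplifying assumption; real setups typically rely on CRM matching or company identification instead. The contacts and domains are invented.

```python
from collections import defaultdict

# Hypothetical user-level touch log, in chronological order.
touches = [
    {"email": "champion@acme.example", "channel": "Google Ads"},
    {"email": "cfo@acme.example", "channel": "LinkedIn Ads"},
    {"email": "it@acme.example", "channel": "Webinar"},
    {"email": "founder@other.example", "channel": "Google Ads"},
]


def account_key(email):
    """Use the email domain as a stand-in for the account (an assumption)."""
    return email.split("@", 1)[1].lower()


def journeys_by_account(touch_log):
    """Group user-level touches into one ordered journey per account."""
    journeys = defaultdict(list)
    for touch in touch_log:
        journeys[account_key(touch["email"])].append(touch["channel"])
    return dict(journeys)


journeys = journeys_by_account(touches)
print(journeys["acme.example"])
```

Seen per user, the champion's journey contains only Google Ads. Seen per account, LinkedIn Ads and the webinar appear in the same journey and can receive credit for the same deal.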

- The long sales cycle problem

Most attribution models are optimized for conversion windows of days or weeks. B2B deals can take 6-12 months to close. That means an impression from a LinkedIn ad in January that genuinely influenced a deal closed in September often falls outside the attribution lookback window entirely.

The media mix model has an advantage here because it looks at longer time windows by design. But most media mix model setups for B2B aren't granular enough to isolate account-specific patterns.
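The lookback-window issue is easy to see with dates. The sketch below checks whether a January impression still counts toward a deal closed in September under two window lengths. The specific dates and the 90-day and 365-day windows are assumptions for illustration, not defaults of any particular tool.

```python
from datetime import date, timedelta


def within_lookback(touch_date, conversion_date, lookback_days):
    """True if the touch happened no more than `lookback_days` before conversion."""
    elapsed = conversion_date - touch_date
    return timedelta(0) <= elapsed <= timedelta(days=lookback_days)


# Hypothetical dates matching the example in the text.
january_impression = date(2024, 1, 15)
september_close = date(2024, 9, 10)

for window in (90, 365):
    counted = within_lookback(january_impression, september_close, window)
    print(f"{window}-day window: impression counted = {counted}")
```

With the shorter window the January impression is dropped entirely, so whatever touch happens to sit inside the window absorbs its credit.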

- The dark funnel problem

Peer reviews on G2, recommendations in customer Slack communities, your CMO's LinkedIn posts, your sales team's thought leadership, and that mention in a Substack newsletter with 8,000 subscribers.

NONE of that is trackable. All of it influences buying decisions. Neither attribution nor MMM captures it perfectly, but at least MMM won't confidently mis-attribute it to the last retargeting ad.

- The pipeline vs. revenue problem

Most attribution models are set up to track MQL generation or demo bookings. But in B2B, those are leading indicators, not outcomes.

What you actually care about is revenue. Or at minimum, pipeline. An attribution model that tells you LinkedIn drove 60% of demo bookings is useful. An attribution model that tells you LinkedIn influenced 40% of closed-won revenue is a completely different (and much more valuable) thing.

This is a setup problem more than a model problem, but it's worth saying out loud: if your attribution model isn't connected to your CRM and tracking actual deal outcomes, you're optimizing for the wrong metric.

When to use attribution, when to use media mix modeling, and when to use both

The best framework here isn't ‘which one is better.’ It's knowing which question you're actually trying to answer.

- Use attribution when you need to:

- Optimize a campaign that's running right now

- Understand which ad creative, targeting segment, or channel is working this quarter

- Connect specific marketing activities to specific pipeline in your CRM

- Make decisions about where to shift budget within a quarter

- Present account-level engagement data to sales

- Use media mix modeling when you need to:

- Justify or re-allocate your annual marketing budget

- Understand the contribution of brand/awareness investment over time

- Model what happens to revenue if you cut spend in a particular channel

- Have a measurement approach that works despite cookieless tracking

- Explain marketing ROI at the board level without it looking like you cherry-picked your dashboard

- Use both when you need to:

- Build a complete measurement stack that covers both short-term optimization and long-term planning

- Triangulate between data sources to build confidence in your numbers

- Handle a channel mix that includes both trackable performance channels and non-trackable brand/events spend

The honest truth is that for most B2B SaaS companies at the $10M-$100M ARR stage, starting with solid multi-touch attribution (especially account-level MTA) gives you more immediate ROI than commissioning an MMM project. MMM makes more sense as your budgets scale and your channel mix diversifies beyond purely performance marketing.

But if you're already running $5M+ in annual marketing spend across multiple channels including brand, events, and paid social, MMM is probably worth the investment. The attribution model alone is leaving a meaningful portion of your story invisible.

Why is media mix modeling having a ✨renaissance✨?

It would be dishonest to write this blog without acknowledging that a big part of MMM's recent resurgence isn't about its methodological superiority. It's about what's happening to attribution's data infrastructure.

Safari and Firefox already block or restrict third-party cookies by default, and Google has repeatedly revised its own plans for them in Chrome. Apple's App Tracking Transparency has reduced measurable attribution windows. GDPR and CCPA create constraints on how user-level data can be collected and used.

Attribution isn't going away, but its data quality is degrading in environments where it relies heavily on cookies and individual tracking. Companies that built their entire measurement strategy around last-click or even multi-touch attribution are starting to see gaps.

MMM doesn't care about cookies. It works from aggregate data that you already own: your spend records, your revenue data, your pipeline reports. It's inherently privacy-safe, which is becoming a real advantage.

How does Factors.ai approach attribution for B2B?

Since we're talking about this from a B2B lens, it's worth being specific about what good attribution actually looks like in practice.

Most attribution tools are built for B2C performance marketing. They track individual user sessions, tie conversions to click IDs, and report at the channel level. That's useful if you're selling software subscriptions that convert in a single session.

For B2B SaaS, where the buying journey is six months long, involves six people, and includes three channels that don't show up in any click tracker, you need something different.

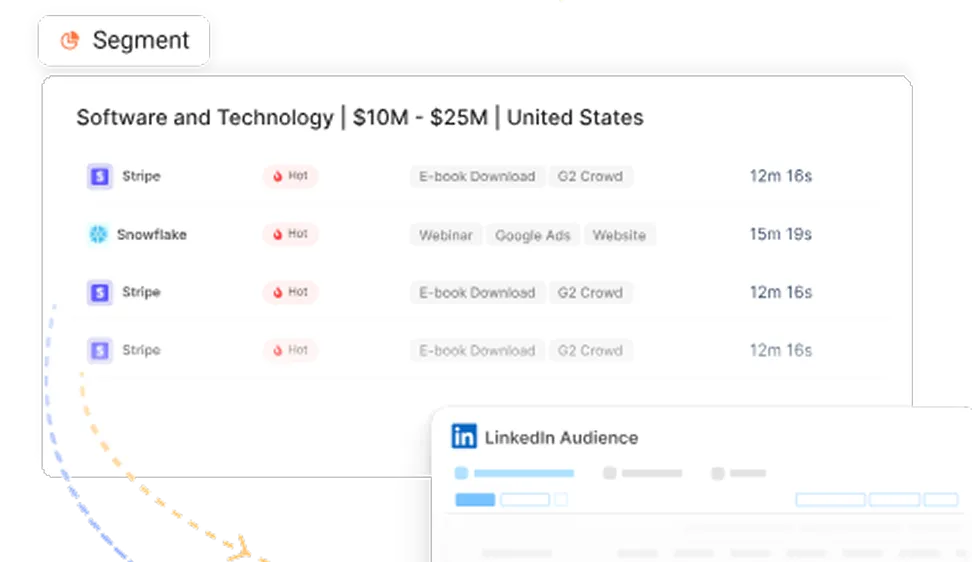

Factors.ai is built to handle account-level attribution specifically. Rather than tracking isolated user journeys, it connects touchpoints across everyone at a given company. So if the VP of Sales saw your LinkedIn ad, the Marketing Manager visited your blog twice, and the CEO watched a product video, those all get attributed to the same account journey.

This matters because it's how B2B deals actually work.

Factors also connects ad engagement directly to CRM pipeline stages, which means you can see not just which channels drive MQLs, but which channels influence deals that actually close. That's the difference between a dashboard that looks good and a report your CFO actually believes.

Features like LinkedIn AdPilot and Google AdPilot inside Factors are built for this, optimizing ad spend not toward clicks or impressions, but toward pipeline-qualified accounts. Frequency Pacing ensures you're not burning budget by hammering the same accounts repeatedly. Cross-Channel Attribution gives you the full picture across paid, organic, and direct.

None of this replaces the strategic value of a proper MMM for long-term planning. But for the in-quarter optimization decisions most B2B marketing teams are actually making day to day, account-level multi-touch attribution is more immediately useful.

A practical framework for choosing your measurement strategy

If you're trying to figure out where to start, here's a simple way to think about it.

Stage 1: Get the basics right (most teams are here)

Focus on getting attribution working properly before worrying about MMM.

- Implement account-level tracking across your website

- Connect your ad platforms to your CRM so you can see pipeline influence, not just form fills

- Pick an attribution model that fits your sales cycle length (time-decay or data-driven if you have the volume)

- Set up regular pipeline influence reports that your sales team can actually use

Stage 2: Expand to multi-touch attribution

- Move beyond last-click to a model that credits the full buying committee journey

- Make sure your attribution covers all trackable channels: paid, organic, direct, email, and product

- Start building a view of channel contribution to closed-won revenue, not just MQLs

Stage 3: Layer in MMM for strategic planning

- Once you have consistent spend data across channels for 18-24 months, MMM becomes viable

- Use it for annual budget allocation, not day-to-day optimization

- Don't expect MMM and attribution to match up perfectly; use the gap between them as a data point, not a problem

Stage 4: Build a unified measurement philosophy

- Use attribution for in-flight optimization, MMM for annual planning

- Triangulate between both when making major budget decisions

- Add incrementality testing (holdout experiments) as a third data source to pressure-test both models

- Build dashboards that show your CMO and CFO the measurement layer most relevant to each conversation
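For the holdout experiments mentioned in Stage 4, the core calculation is a comparison of conversion rates between accounts that saw a campaign and accounts that were deliberately held out. The sketch below shows that arithmetic only. The group sizes and conversion counts are invented, the function name is hypothetical, and a real test would also need random assignment and a significance check, which are not shown.

```python
def incremental_lift(treated_conversions, treated_size,
                     holdout_conversions, holdout_size):
    """Compare an exposed group against a holdout group that saw no ads."""
    treated_rate = treated_conversions / treated_size
    holdout_rate = holdout_conversions / holdout_size
    return {
        "treated_rate": treated_rate,
        "holdout_rate": holdout_rate,
        # Conversions that would not have happened without the campaign.
        "incremental_conversions": (treated_rate - holdout_rate) * treated_size,
        # Lift relative to the holdout baseline.
        "relative_lift": (treated_rate - holdout_rate) / holdout_rate,
    }


# Hypothetical experiment: 1,000 target accounts exposed, 500 held out.
result = incremental_lift(
    treated_conversions=60, treated_size=1000,
    holdout_conversions=20, holdout_size=500,
)
print(result)
```

In this example, attribution might credit all 60 conversions to the campaign, while the holdout suggests roughly 20 of them were incremental. That difference is what makes the experiment a useful check on both models.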

Common mistakes to avoid when choosing your attribution model

- Treating one channel as the hero because your attribution model says so.

Attribution models can only see what they can track. If organic, dark social, and brand all contributed and only paid search is trackable, paid search will look like a genius. Trust the data, but know its limits.

- Running a media mix model without enough data.

If you've only been spending consistently across channels for 8 months, an MMM will produce outputs that look rigorous but are statistically shaky. More months, more variation in spend, more reliable model.

- Using attribution to justify brand cuts.

Brand campaigns rarely produce direct-trackable clicks. If you cut brand spend because it doesn't show up in attribution, you'll probably see performance channels degrade 6-12 months later as brand awareness thins out. MMM helps you see this relationship. Attribution doesn't.

- Picking the attribution model that tells the best story, not the most accurate one.

Different models produce wildly different credit distributions. The temptation is to pick the one that makes your favorite channel look good. The right move is to pick the one that most accurately reflects how your buyers actually make decisions.

- Confusing MMM with attribution because they're both ‘measurement.’

They answer different questions. Combining insights from both is smart. Conflating them in the same conversation is a recipe for confusion.

In a nutshell

Attribution modeling and media mix modeling are not rivals. They're different instruments measuring the same concert from different seats in the hall.

Attribution sits close to the stage; it can tell you every note that was played and who played it. But it can overlook how the room's acoustics shaped the experience.

Media mix modeling sits in the back row; it can't identify individual musicians, but it can tell you how the venue affected the audience's experience and whether the band should book this room again next year.

For most B2B marketers, especially those optimizing quarterly campaigns and defending budget decisions, attribution is the first place to invest. Make it account-level, connect it to revenue, and stop optimizing for MQLs that don't close.

As you scale and your channel mix grows to include brand, events, and offline, MMM becomes a genuinely useful layer, not because attribution stopped working, but because the questions you're asking get bigger.

And if anyone asks you in the budget meeting, "Which half of your marketing spend is working?"

With both models running, you can finally smile and give them an actual answer instead of quietly updating your LinkedIn profile.

If you're building out account-level attribution for your B2B marketing program and want to see how Factors.ai connects ad spend to pipeline, book a demo, and we'll show you exactly what your current setup is missing.

FAQs for Attribution vs Media Mix Modeling

Q1. What is the main difference between Attribution and MMM?

Attribution tracks individual user/account journeys to assign credit to specific touchpoints. MMM uses aggregate historical data and statistical regression to estimate the impact of entire channels on revenue without tracking individuals.

Q2. Is MMM better than Multi-Touch Attribution for B2B?

Not necessarily. MMM is better for high-level budget planning and capturing non-digital influence, but MTA is superior for real-time campaign optimization and understanding specific account engagement.

Q3. Why is Media Mix Modeling becoming popular again?

The decline of third-party cookies, iOS privacy changes (ATT), and the rise of "Dark Social" have made individual-level tracking (Attribution) less accurate. MMM is privacy-safe because it uses aggregate data.

Q4. How do you reconcile the different numbers between multi-touch attribution and media mix modeling?

You shouldn't expect them to match. MTA measures "trackable intent," while MMM measures "total contribution." The gap between them usually represents your brand’s "halo effect" and non-trackable word-of-mouth.

