Generative AI vs LLM: Understanding the Core Difference

Understand generative AI vs LLM: the real differences, the use cases, and how B2B marketers use both for growth, reporting, and pipeline.

TL;DR

- Generative AI is the broad category of AI systems that create new content across text, images, audio, video, and code. LLMs are one specific type of generative AI, focused primarily on language.

- The confusion between generative AI and LLMs exists because ChatGPT became the face of both concepts at the same time. The two overlap, but they aren't identical.

- For B2B marketing teams, LLMs handle the intelligence layer: campaign summaries, email drafts, intent signal analysis, and reporting. Broader generative AI tools handle the creative layer: ad visuals, video explainers, and landing page mockups.

- Mid-market B2B teams often benefit faster from these tools than enterprises because they can move without months of procurement and governance review.

You know that one person who calls every sneaker “Nike”?

Even if it’s Adidas. Or Puma. Or something completely different. At some point, you stop correcting them, but also… you know the difference matters.

That’s basically what’s happening with AI in most B2B teams right now.

“Generative AI.”

“LLMs.”

“AI agents.”

“Automation.”

All getting lumped together like they’re the same thing, when they’re actually doing very different jobs under the hood. And here’s where it starts to pinch a little.

Because when teams blur the line between generative AI and LLMs, they misdesign systems. They expect a chatbot to behave like a workflow engine… expect a content generator to understand pipeline context… and buy tools thinking they’re getting intelligence, when what they’re really getting is output.

It’s like hiring a brilliant copywriter and then being surprised they can’t run your CRM (I mean, hello?!)

The problem isn’t actually the tech… it’s the mental model.

Most teams are trying to plug “AI” into their stack without deciding what role it’s supposed to play. Is it there to generate? To reason? To act? To orchestrate? Those are different layers, and collapsing them into one idea is how you end up with systems that look good in a demo but fall apart in real workflows.

So let’s get to it in a way that actually helps.

Generative AI vs LLM: the one clear answer you need first

I'll start with the answer, because you shouldn't have to scroll through six paragraphs of context before reaching the point.

- Generative AI is the broader category. It refers to any AI system designed to create new outputs from prompts or instructions. Those outputs can be text, images, audio, video, code, 3D assets, or synthetic data. If an AI system is producing something that didn't exist before, it falls under the generative AI umbrella.

- LLMs, or large language models, are one specific type of generative AI. They're built to understand and generate human language. Think writing, summarizing, classifying, reasoning, and conversation. Every LLM is a form of generative AI, but not every generative AI system is an LLM.

Here's the analogy that might help a little more… generative AI is the umbrella. LLMs are one very well-known section standing under it. Image generators, music tools, and video creation systems are other sections under that same umbrella, each handling a different type of output.

The reason confusion runs so deep is this: ChatGPT became the public face of AI practically overnight, and it happens to be both an LLM and a generative AI product. People started using "GenAI" and "LLM" as synonyms because their first encounter with either concept was the same product. The terms overlap in that specific case, but they aren't identical in meaning. Understanding that distinction changes how you evaluate tools, allocate budget, and think about what AI can actually do for your team beyond writing blog posts.

What is generative AI, really?

Generative AI describes a class of AI systems that produce net-new outputs from prompts, instructions, or input data. The keyword is "generative." These systems don't just analyze existing information or categorize it into buckets. They create something new based on patterns they've learned during training.

What makes the category interesting is its range. Generative AI spans multiple modalities, which is a fancy way of saying it works across many different types of output. Text is the modality most people think of first, but it's only one piece of the picture. Image generation, audio synthesis, video creation, code generation, and even 3D asset production all fall under the generative AI umbrella. Each modality typically relies on different underlying architectures and training approaches.

To make this concrete, here are a few examples across modalities. ChatGPT and Claude generate text. DALL·E and Midjourney generate images. Sora and Runway produce video content. Tools like Suno generate music. GitHub Copilot generates code. Each of these is a generative AI system, but they don't all share the same technical foundation: the text and code tools are built on LLMs, while the image, video, and music tools are generally not LLMs and often rely on different architectures.

If you look under the hood, these systems rely on different model architectures depending on what they're producing. Text generation typically uses transformer-based models (which is the architecture behind LLMs). Image generation often uses diffusion models, which learn to create images by gradually refining noise into coherent visuals. Earlier image tools used GANs, or generative adversarial networks, where two neural networks essentially compete with each other to produce realistic outputs. The architecture varies, but the principle stays constant: the system generates something new rather than simply retrieving or classifying existing information.

This is where the Gen AI vs LLM distinction becomes practically useful. When someone on your team says "we need generative AI," the right follow-up question is always about the output type. Do you need generated text? Generated images? Generated video? The answer determines which subset of generative AI you actually need, and that changes the tooling conversation entirely. A team looking for ad creative generation needs a different tool (and budget) than a team looking for automated campaign reporting summaries.

What is an LLM?

A large language model is an AI model trained on enormous text datasets to understand and generate human language. The "large" in LLM refers to both the size of the training data and the number of parameters (adjustable values) within the model. These models learn the statistical relationships between words, phrases, and concepts at a scale that allows them to produce remarkably fluent, contextual language output.

What LLMs are genuinely good at is a longer list than most people expect.

- The obvious capability is writing, whether that's drafting emails, generating blog outlines, or producing ad copy variants.

- LLMs also excel at summarization, pulling key points from lengthy documents or transcripts.

- They handle information extraction well, identifying specific data points within unstructured text.

- They can also assist with coding, suggest SQL queries, classify text into categories, answer questions based on provided context, and reason through multi-step problems involving language.

That versatility is exactly why they've become the default AI tool for so many knowledge workers.

The world of LLMs has also grown immensely, and the names worth knowing have shifted even in the past year. The GPT family from OpenAI remains the most widely recognized.

- Anthropic's Claude has earned a strong reputation for nuanced reasoning and longer context handling.

- Google's Gemini has pushed into multimodal territory, handling both text and visual inputs.

- Meta's Llama models have become a go-to choice for teams that want the flexibility of openly available model weights.

Each model has its own strengths, but they all share the core LLM architecture: transformer-based, trained on massive text corpora, designed to work with language.

One important nuance that keeps blurring the boundaries: modern LLMs are increasingly multimodal. GPT-4 and Gemini can process images alongside text. Some models can generate or interpret audio. This means the clean line between "LLMs handle language" and "other generative AI handles everything else" is getting fuzzier with every major model release. For practical purposes, though, the core strength of LLMs remains language. They're at their best when the input is text, the output is text, and the task involves understanding, reasoning, or generating language.

The major difference between generative AI and LLMs

The difference between LLM and generative AI is essentially a category-versus-member relationship. Generative AI is the broad field. LLMs are one specific type within it. Comparing them directly is a bit like comparing "vehicles" to "sedans." Every sedan is a vehicle, but not every vehicle is a sedan. Every LLM is generative AI, but not every generative AI system is an LLM.

Here's a comparison table that lays out the practical distinctions:

The table is useful for quick reference, but true understanding comes from considering specific scenarios. If someone on your team asks for a logo concept or a set of ad visuals for a LinkedIn campaign, an image-generation model (a broader generative AI model) is the better fit. If someone needs a pipeline analysis summary or a first draft of a nurture email sequence, an LLM is usually the stronger choice. The right tool depends on the output type and the task, not on which term sounds more impressive in a strategy deck.

Where things get a tad confusing is in multi-step workflows… a content repurposing workflow might use an LLM to summarize a webinar transcript, then pass that summary to an image generation tool to create social media visuals. Both are generative AI. Only one is an LLM. The workflow uses both, and that's increasingly how real teams operate. The distinction between LLMs and generative AI isn't about choosing one. It's about knowing which component does what within a larger process.

One more thing worth noting. When people casually debate AI vs. LLM, they're usually conflating three nested categories. AI is the broadest term, covering everything from rule-based systems to machine learning to deep learning. Generative AI is a subset of AI. LLMs are a subset of generative AI. Keeping those layers straight prevents a lot of confused conversations in planning meetings.
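The nesting can be sketched as a Python class hierarchy, where `isinstance` checks mirror the subset relationships. The class names are purely illustrative and don't correspond to any real library; treat this as a mental model, not a taxonomy of actual products.

```python
class AISystem:
    """Broadest layer: any AI system, from rule-based automation to deep learning."""


class GenerativeAI(AISystem):
    """Subset of AI: systems that create new outputs."""


class LLM(GenerativeAI):
    """Subset of generative AI: models built to understand and generate language."""


class ImageGenerator(GenerativeAI):
    """Generative AI that is not an LLM."""


class RuleBasedRouter(AISystem):
    """AI in the broad sense, but not generative."""


chatgpt_like = LLM()
image_tool = ImageGenerator()
router = RuleBasedRouter()

# Every LLM is generative AI (and therefore AI).
print(isinstance(chatgpt_like, GenerativeAI))  # True
# Not every generative AI system is an LLM.
print(isinstance(image_tool, LLM))  # False
# Not every AI system is generative.
print(isinstance(router, GenerativeAI))  # False
```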

Generative AI vs LLM for B2B marketing teams

Now let's get specific about where this distinction actually shows up in your day-to-day workflows. Because for most B2B marketing teams, the question isn't "what's the theoretical difference?" It's "which tool do I reach for when I have a specific job to do?"

Where LLMs win for B2B teams

LLMs shine when the task involves language, analysis, or reasoning over text. For B2B marketers, that covers a surprisingly large portion of the workload that eats up your week.

- Campaign summaries

Instead of manually pulling insights from ad platform dashboards and writing a narrative for your leadership team, an LLM connected to your data can generate a first draft that highlights spend, performance trends, and anomalies. You still review and refine it, but the initial synthesis goes from 90 minutes to 10.

- Ad copy variants

Feed an LLM your value proposition, target persona, and a few constraints, and you'll get a dozen variations to test. The quality of those variants depends heavily on how good your prompt and context are, but the speed advantage is undeniable compared to writing each version from scratch.

- SDR email drafts (speed advantage)

When your sales development team needs personalized outreach for a list of accounts, an LLM can generate first drafts tailored to each company's industry, size, and likely pain points. The SDR still needs to review and edit, but the starting point is dramatically better than a blank email.

- CRM note summarization

Sales reps leave notes in varying formats and levels of detail. An LLM can synthesize weeks of scattered CRM notes into a coherent account summary before a quarterly review or handoff conversation. That alone can save hours of prep time across a team.

- Attribution explanation

Most attribution data lives in dashboards that show numbers but don't explain them. An LLM can take that data and produce a plain-English narrative: "LinkedIn campaigns contributed to 34% of pipeline this quarter, with the strongest influence coming from the product demo ads targeting mid-market accounts." That translation from data to story is exactly what LLMs do well.

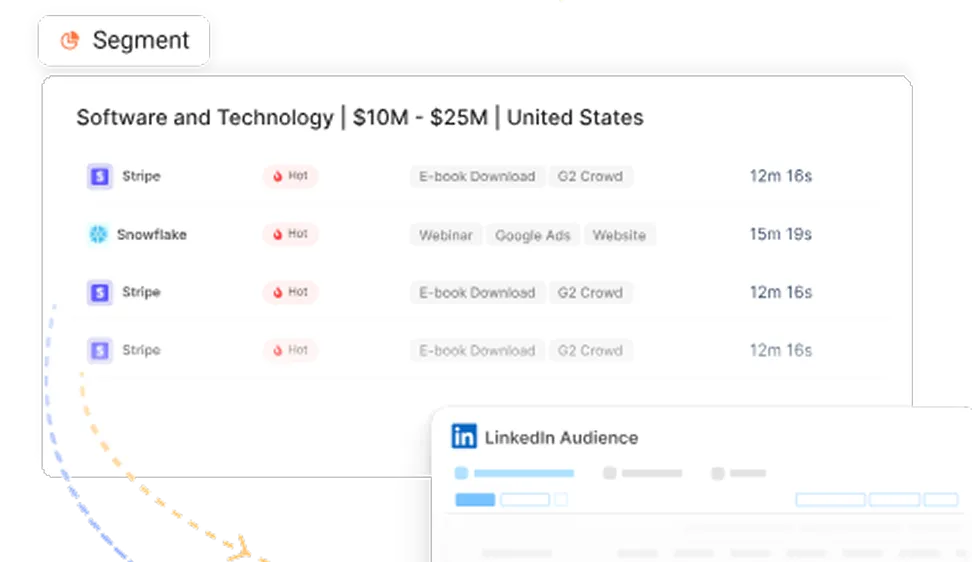

- Website visitor intent summaries

When your analytics platform identifies anonymous companies visiting specific pages, an LLM can cluster those signals and produce a summary: "These 12 accounts are showing research-stage behavior around your integration features." That kind of synthesis turns raw data into something your demand gen team can actually act on.
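To make the attribution explanation item above concrete, here's a minimal Python sketch of the data-to-narrative step. It assumes pipeline figures have already been exported per channel; the function name, the channel figures, and the prompt wording are all hypothetical. The sketch only assembles a grounded prompt and leaves the actual model call to whichever LLM API your team uses.

```python
def build_attribution_prompt(pipeline_by_channel: dict[str, float], quarter: str) -> str:
    """Turn raw pipeline-by-channel numbers into a grounded prompt for an LLM.

    Shares are computed in code, so the model writes the narrative
    instead of doing the arithmetic.
    """
    total = sum(pipeline_by_channel.values())
    if total <= 0:
        raise ValueError("pipeline total must be positive")
    lines = []
    # List channels from largest to smallest pipeline contribution.
    for channel, amount in sorted(pipeline_by_channel.items(), key=lambda kv: kv[1], reverse=True):
        share = 100 * amount / total
        lines.append(f"- {channel}: ${amount:,.0f} ({share:.0f}% of pipeline)")
    return (
        f"You are a B2B marketing analyst. Using only the figures below for {quarter}, "
        "write a three-sentence plain-English summary for leadership. "
        "Do not invent numbers.\n\n" + "\n".join(lines)
    )


# Illustrative figures only, not real data.
prompt = build_attribution_prompt(
    {"LinkedIn": 340_000, "Google Ads": 410_000, "Organic": 250_000}, "Q3"
)
print(prompt)
```

Doing the math before the model sees the data is a deliberate choice: the LLM's job is the plain-English story, not the percentages.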

Where broader generative AI wins

Broader generative AI tools earn their place when the output isn't text. For B2B teams, that typically means creative production tasks that used to require a designer, a video editor, or a significant agency budget.

Ad creatives are the most common entry point. Image generation tools can produce visual concepts for LinkedIn carousel ads, display banners, and social media posts. The quality has improved enough that many mid-market teams use AI-generated visuals for A/B testing before investing in polished designs for the winners.

Video explainers have become remarkably accessible. Tools that generate short video content from scripts and visual prompts can produce product overview videos, feature announcement clips, and even short customer story summaries. The output isn't replacing a full production team, but it's filling a gap that many B2B companies simply left empty before because video was too expensive or slow to produce.

Product demo voiceovers, sales deck visuals, and landing page mockups all follow a similar pattern. Generative AI tools handle the first draft or the initial concept, which means your creative team spends less time starting from zero and more time refining and polishing.

Webinar snippets are a great example of how LLMs and broader generative AI work together. A speech-to-text model transcribes the webinar, and an LLM summarizes the transcript. An AI video tool cuts highlight clips. An image tool creates promotional visuals. The LLM drafts social copy to accompany each clip. That's a multi-tool workflow where both categories of generative AI contribute to the end result.
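The webinar workflow can be sketched as a chain of steps, each tagged with the kind of model that typically handles it (transcription is usually done by a dedicated speech-to-text model rather than the LLM itself). Every step here is a hypothetical placeholder for whatever tools your team actually uses; the sketch only shows how outputs flow from one step to the next and which steps are LLM work.

```python
from dataclasses import dataclass, field


@dataclass
class WorkflowRun:
    # Ordered (step name, model type) pairs, plus each step's output.
    steps: list[tuple[str, str]] = field(default_factory=list)
    outputs: dict[str, object] = field(default_factory=dict)

    def record(self, name: str, model_type: str, output):
        """Log a step and pass its output through to the next step."""
        self.steps.append((name, model_type))
        self.outputs[name] = output
        return output


def run_webinar_workflow(recording: str) -> WorkflowRun:
    run = WorkflowRun()
    # Each string below is a hypothetical placeholder for a real tool's output.
    transcript = run.record("transcribe", "speech-to-text", f"transcript of {recording}")
    summary = run.record("summarize", "llm", f"summary of {transcript}")
    clips = run.record("cut highlight clips", "video", [f"clip {i}" for i in range(1, 4)])
    run.record("create promo visuals", "image", [f"visual for {clip}" for clip in clips])
    run.record("draft social copy", "llm", [f"post for {clip}, drawing on {summary}" for clip in clips])
    return run


run = run_webinar_workflow("webinar-recording.mp4")
for name, model_type in run.steps:
    print(f"{name:<22}{model_type}")

llm_steps = [name for name, kind in run.steps if kind == "llm"]
print(llm_steps)  # ['summarize', 'draft social copy']
```

Only two of the five steps are LLM work, which is the whole point: the workflow is generative AI end to end, but the LLM is one component inside it.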

The honest take on what B2B teams actually need

Here's the thing I keep coming back to when teams ask about this comparison. Most B2B teams don't need "AI" in some grand, transformative sense. They need fewer bottlenecks. They need the campaign summary to not take a full morning. They need the email sequence to not sit in a backlog for two weeks. They need the webinar recap to not require three meetings to plan.

LLMs and broader generative AI tools both serve that goal, just for different types of output. The teams getting real value aren't debating generative AI vs LLM definitions in Slack threads. They're identifying their slowest workflows and testing whether an AI tool can cut the turnaround time in half. That's a much more productive starting point than any theoretical comparison.

How does Factors.ai use LLMs and Generative AI thinking?

I want to ground this conversation in something practical, because theoretical distinctions only matter if they change what you actually do. Factors.ai sits at the intersection of revenue data and marketing intelligence, which means both LLMs and broader generative AI thinking show up in how the platform helps B2B teams work.

- Revenue reporting that answers questions in plain English

One of the most frustrating parts of B2B marketing is the gap between having data and understanding data. Most teams have plenty of numbers. They have pipeline figures, campaign spend data, attribution percentages, and conversion rates spread across multiple platforms. What they don't have is a fast way to turn those numbers into answers.

An LLM connected to CRM and ad platform data can bridge that gap. Instead of building a custom dashboard view to answer "which LinkedIn campaigns influenced pipeline last month," you can ask the question in natural language and get a synthesized response. The same goes for questions like "which accounts are surging in engagement this quarter" or "where are we overspending relative to pipeline contribution." The LLM doesn't replace the data. It makes the data conversational and accessible to people who don't want to build pivot tables at 4pm on a Thursday.

That shift matters more than it might seem on the surface. When reporting becomes something you can query rather than something you have to build, the frequency of insight goes up. Teams start asking better questions because the cost of asking is lower.

- Demand gen acceleration through intent signal analysis

Demand generation in B2B involves a lot of signal interpretation. Which accounts are showing buying intent? What stage of the journey are they in? What should the next outreach step be? These questions require synthesizing data from multiple sources, and that synthesis is exactly where LLMs add value.

An LLM can summarize intent signals across website visits, content downloads, ad engagement, and third-party data to produce account-level intelligence. It can cluster accounts by likely buying stage, separating early-research accounts from those showing evaluation behavior. It can even recommend next-best actions based on what's worked for similar accounts in the past.

That said, none of this can replace a demand gen strategist's judgment. What it does is compress the time between "we have signals" and "we have a plan." The strategist still decides what to do, but they're working from a synthesized brief instead of raw data exports.

- The content engine: one asset becomes many

This is where LLMs and generative AI thinking converge most naturally. Every B2B team has a content multiplication problem. You produce a webinar, and it should become a blog post, a set of LinkedIn posts, ad copy, an email nurture sequence, and a few sales enablement snippets. In practice, that repurposing often stalls because someone has to sit down and manually create each derivative asset.

A generative AI workflow can turn that one webinar into all of those assets. The LLM handles the text-based outputs: blog draft, social copy, email drafts, and enablement summaries. Image and video tools handle the visual outputs: social graphics, short video clips, and presentation visuals. The output still needs human review and editing, but the zero-to-first-draft stage that used to take days gets compressed into hours.

The real advantage in all of this isn't the AI output itself. Honestly, first drafts from AI tools are rarely publish-ready without significant editing. The advantage is operational speed with context. When your AI tools are connected to your actual revenue data, campaign performance, and account intelligence, the output starts in a much better place than a generic prompt ever could. Context turns a mediocre AI draft into a useful starting point, and that's the difference between a tool you actually adopt and one that sits unused after the first month of excitement fades.
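One concrete form of that context is the intent signal analysis described earlier, which usually starts by rolling raw signals up per account before any LLM writes a summary. Here's a minimal Python sketch of that pre-processing step, assuming a flat list of events with hypothetical `account`, `signal`, and `page` fields. The stage rule (a pricing or demo page visit counts as evaluation behavior) is an illustrative assumption, not how any particular platform classifies accounts.

```python
from collections import Counter, defaultdict

# Hypothetical rule for this sketch: visiting these pages signals evaluation.
EVALUATION_PAGES = {"/pricing", "/demo"}


def summarize_accounts(events):
    """Roll raw intent events up into one compact brief per account."""
    counts = defaultdict(Counter)  # account -> Counter of signal types
    pages = defaultdict(set)       # account -> set of pages visited
    for event in events:
        counts[event["account"]][event["signal"]] += 1
        if event.get("page"):
            pages[event["account"]].add(event["page"])

    briefs = {}
    for account, signal_counts in counts.items():
        visited = pages.get(account, set())
        stage = "evaluation" if visited & EVALUATION_PAGES else "early research"
        briefs[account] = {
            "signals": dict(signal_counts),
            "pages": sorted(visited),
            "likely_stage": stage,
        }
    return briefs


# Illustrative events only, not real data.
events = [
    {"account": "acme.example", "signal": "web_visit", "page": "/integrations"},
    {"account": "acme.example", "signal": "web_visit", "page": "/pricing"},
    {"account": "acme.example", "signal": "ad_click", "page": None},
    {"account": "globex.example", "signal": "content_download", "page": "/guides/roi"},
]
for account, brief in summarize_accounts(events).items():
    print(account, brief["likely_stage"], brief["signals"])
```

The per-account briefs are the compact, structured input you would then hand to an LLM for the narrative summary, instead of raw data exports.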

Common myths B2B teams still believe about generative AI and LLMs

I've had enough conversations with marketing leaders to know there's a set of misconceptions that keeps circulating, regardless of how much the technology evolves. These myths aren't harmless. They shape buying decisions, set wrong expectations, and sometimes prevent teams from getting value they could have accessed months earlier.

Myth 1: LLM and generative AI are the same thing

This is the myth this entire article exists to address, so I won't belabor it. LLMs are a subset of generative AI, not a synonym for it. The confusion comes from ChatGPT being the most visible example of both categories simultaneously. Knowing the difference matters because it changes which tools you evaluate and what you expect them to produce. A team that thinks "generative AI" only means text generation will miss opportunities in creative production, video, and design workflows. A team that thinks "LLM" means any AI that creates things will be confused when their text model can't generate the LinkedIn ad banner they were hoping for.

Myth 2: LLMs are going to replace marketers

This one has been floating around since GPT-3, and it still generates anxiety in every team I talk to. The reality is more nuanced and, frankly, less dramatic. LLMs are exceptionally good at removing administrative work: drafting, summarizing, formatting, synthesizing data into narratives, and producing first versions of repetitive content. These tasks consume a shocking amount of a marketer's week, and LLMs can compress them significantly.

What LLMs can't do is the strategic work. They can't decide which market segment to prioritize next quarter. They can't sense that a competitor's positioning shift requires a messaging overhaul. They can't read the room in a pipeline review meeting and adjust the narrative on the fly. Strategy, judgment, and relationship intelligence remain firmly human skills. The marketers who thrive alongside LLMs will be those who let the tools handle the production layer while they focus on the decision layer.

Myth 3: Bigger model means better ROI

There's an understandable assumption that the most powerful, most parameter-heavy model must be the best choice for any task. In practice, workflow design matters far more than raw model size for most B2B marketing applications. A smaller, faster model with good prompts, proper context, and a well-designed workflow will often outperform a massive model used with generic prompts and no integration into your actual data.

I've seen teams spend months evaluating the "best" LLM when the bottleneck was never the model. It was the lack of a clear workflow connecting the model to their CRM data, their campaign assets, and their reporting cadence. The model is the engine, but without the chassis, the wheels, and someone who knows where to drive, the engine just revs in place.

Myth 4: Only enterprises can benefit from generative AI

This one actively frustrates me, because I've watched mid-market B2B teams move faster with these tools than many enterprise organizations. The reason is simple: mid-market teams have shorter procurement cycles, fewer layers of approval, and less legacy infrastructure to integrate around. An enterprise might spend six months on an AI governance review before anyone touches a tool. A 200-person B2B company can test an LLM workflow in a week and have it running in production by the end of the month.

The ROI argument actually favors smaller teams in many cases. When you have a lean marketing team wearing multiple hats, the time saved by automating reporting summaries, content drafts, and data synthesis directly translates to capacity for strategic work. That trade-off is more impactful per person on a small team than in a 50-person marketing department where the tasks were already distributed across specialists.

Which one should your business invest in?

Instead of giving a vague "it depends," here's a decision framework you can actually use in your next planning conversation.

- If your primary pain point is writing, reporting, or research, lean into LLM-led workflows. This covers campaign performance summaries, SDR email generation, CRM data synthesis, content drafting, and any task where the input and output are primarily language. The tools are mature, the integration options are growing, and the time savings are measurable within weeks.

- If your primary pain point is creative production, invest in broader generative AI tools. This covers ad visual creation, video content, presentation design, landing page mockups, and any workflow where the output is visual or multimedia. These tools are improving rapidly, and they're closing the gap between "AI-generated draft" and "actually usable creative asset" faster than most people expected.

- If your primary pain point is growth efficiency, use both. And honestly, this is where most B2B teams land once they've spent a few months experimenting. The teams generating the most value aren't treating this as a binary choice. They're building workflows where LLMs handle the intelligence and language layer while broader generative AI tools handle the creative and multimedia layer. A single webinar becomes a full content package. A quarterly review becomes a data-enriched narrative. A target account list becomes a personalized outreach sequence with matching visuals.
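The three branches above are simple enough to write down as a lookup. Here's a minimal Python sketch, assuming you've already labeled each workflow's primary pain point with one of the framework's three categories; the enum, the mapping, and the sample workflows are illustrative, not a real tool.

```python
from enum import Enum


class PainPoint(Enum):
    LANGUAGE = "writing, reporting, or research"
    CREATIVE = "creative production"
    GROWTH_EFFICIENCY = "growth efficiency"


# One recommendation per branch of the framework.
RECOMMENDATION = {
    PainPoint.LANGUAGE: "LLM-led workflows",
    PainPoint.CREATIVE: "broader generative AI tools",
    PainPoint.GROWTH_EFFICIENCY: "both, combined in one workflow",
}


def recommend(pain_point: PainPoint) -> str:
    """Map a workflow's primary pain point to the tool category the framework suggests."""
    return RECOMMENDATION[pain_point]


# Hypothetical workflow audit.
workflows = {
    "quarterly campaign summary": PainPoint.LANGUAGE,
    "LinkedIn ad visuals": PainPoint.CREATIVE,
    "webinar repurposing": PainPoint.GROWTH_EFFICIENCY,
}
for workflow, pain in workflows.items():
    print(f"{workflow}: {recommend(pain)}")
```

The hard part isn't the lookup; it's labeling each workflow honestly, which is why the audit comes first.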

The decision is about identifying your highest-friction workflows and matching the right tool type to each one. Start with the workflow that costs your team the most time relative to its value, test an AI tool against it, measure the improvement, and expand from there. That's more productive than any grand AI strategy document.

One more thought on this. The smartest companies heading into 2026 aren't spending their energy debating LLM vs generative AI as an either-or question. They're combining both into workflows tied directly to revenue outcomes. Pipeline influenced, deals accelerated, content velocity increased, reporting cycles shortened. When you frame AI investment in terms of revenue impact rather than technology categories, the distinction between generative AI and LLMs becomes a practical architecture decision rather than a philosophical debate.

In a nutshell

Here's what this all comes down to, in terms you can actually take into your next team conversation.

Generative AI is the broad category of AI systems that create new content. It spans text, images, audio, video, code, and more. LLMs are a specific, language-focused subset within that category. They're built for writing, summarizing, reasoning, and any task that involves understanding or generating text. Every LLM is generative AI, but not every generative AI system is an LLM.

For B2B marketing teams, LLMs cover the intelligence layer: campaign reporting, email drafts, intent signal summaries, CRM synthesis, and data-to-narrative translation. Broader generative AI tools cover the creative layer: ad visuals, video content, presentation mockups, and multimedia production. The most effective teams aren't choosing between these categories. They're building workflows that use both, connecting language models to their revenue data while using creative AI tools to scale their content production.

The practical starting point is to audit your slowest, most repetitive workflows. Identify whether each one is primarily a language task or a creative production task. Match the right tool type to each. Test it for two weeks, measure time saved and output quality, and expand from there. Skip the grand AI strategy. Start with one workflow that's costing you time, fix it, and let the results build momentum for everything that follows.

Frequently asked questions about generative AI vs LLM

Q1. Is ChatGPT generative AI or an LLM?

It's both. ChatGPT uses a large language model architecture (specifically, the GPT family of models) as its core technology. At the same time, it sits firmly within the generative AI category because it creates new text outputs from prompts. The reason people often confuse the two terms is that ChatGPT was their first encounter with both concepts simultaneously.

Q2. What is the main difference between LLM and generative AI?

An LLM is a specific type of AI model focused on language tasks: writing, summarization, reasoning, classification, and conversation. Generative AI is the broader category that includes LLMs alongside image generators, video tools, music creation systems, and any other AI that produces new content. The relationship is a subset one. All LLMs are generative AI, but generative AI includes many systems that aren't LLMs.

Q3. Is generative AI better than an LLM?

This isn't a useful comparison because they aren't competing alternatives. Generative AI is a category, and LLM is one model type within that category. Asking whether generative AI is "better" than an LLM is like asking whether "vehicles" are better than "trucks." The answer depends entirely on your specific task. If you need text-based output, an LLM is your tool. If you need image, video, or audio output, you'll reach for a different type of generative AI.

Q4. Can B2B marketers use both LLMs and generative AI?

Absolutely, and most effective teams already do. LLMs handle the intelligence and writing tasks: drafting content, summarizing campaign data, generating email sequences, and synthesizing account signals. Broader generative AI tools handle creative assets: ad visuals, video clips, presentation graphics, and landing page mockups. A typical workflow might use an LLM to summarize a webinar transcript and then use image and video tools to create promotional assets from that summary.

Q5. Is AI vs LLM the same comparison as generative AI vs LLM?

No, these are different comparisons because AI, generative AI, and LLM exist at three different levels. AI is the broadest category, encompassing everything from simple rule-based automation to machine learning to deep learning. Generative AI is a subset of AI, specifically the portion that creates new content. LLMs are a subset of generative AI, specifically focused on language. When someone asks about AI vs. LLM, they're comparing a massive field to one specific branch within it, which makes the comparison far wider than generative AI vs LLM.

